Short Answer

Scaling breaks because a single approver turns leadership into a throughput constraint, converting decision latency into a hidden tax on ARR. When approvals funnel through one person, pipeline velocity, conversion, and GTM timing slow; a seven-day CEO loop on $300k deals in a $50M business compounds into multimillion-dollar annual leakage.

Fix it by redesigning authority:

• map decision-impact bands,

• delegate revenue P&Ls not tasks,

• pre-authorize roughly 70 percent of operational decisions with econometric guardrails,

• and measure decision velocity as a revenue metric so leadership becomes an architect, not a gatekeeper.

The Hidden Revenue Tax: Why Scaling Breaks When Leadership Becomes the Bottleneck

Most founders wear control as proof they care. It looks noble, visible, and oddly satisfying. It also acts as a tax on revenue. When a single human remains the gatekeeper for decisions that materially affect ARR, momentum slows. Velocity dies. Growth stalls between $50 million and $200 million ARR, not because product-market fit failed, but because decisions waited on a person rather than on data and delegated authority.

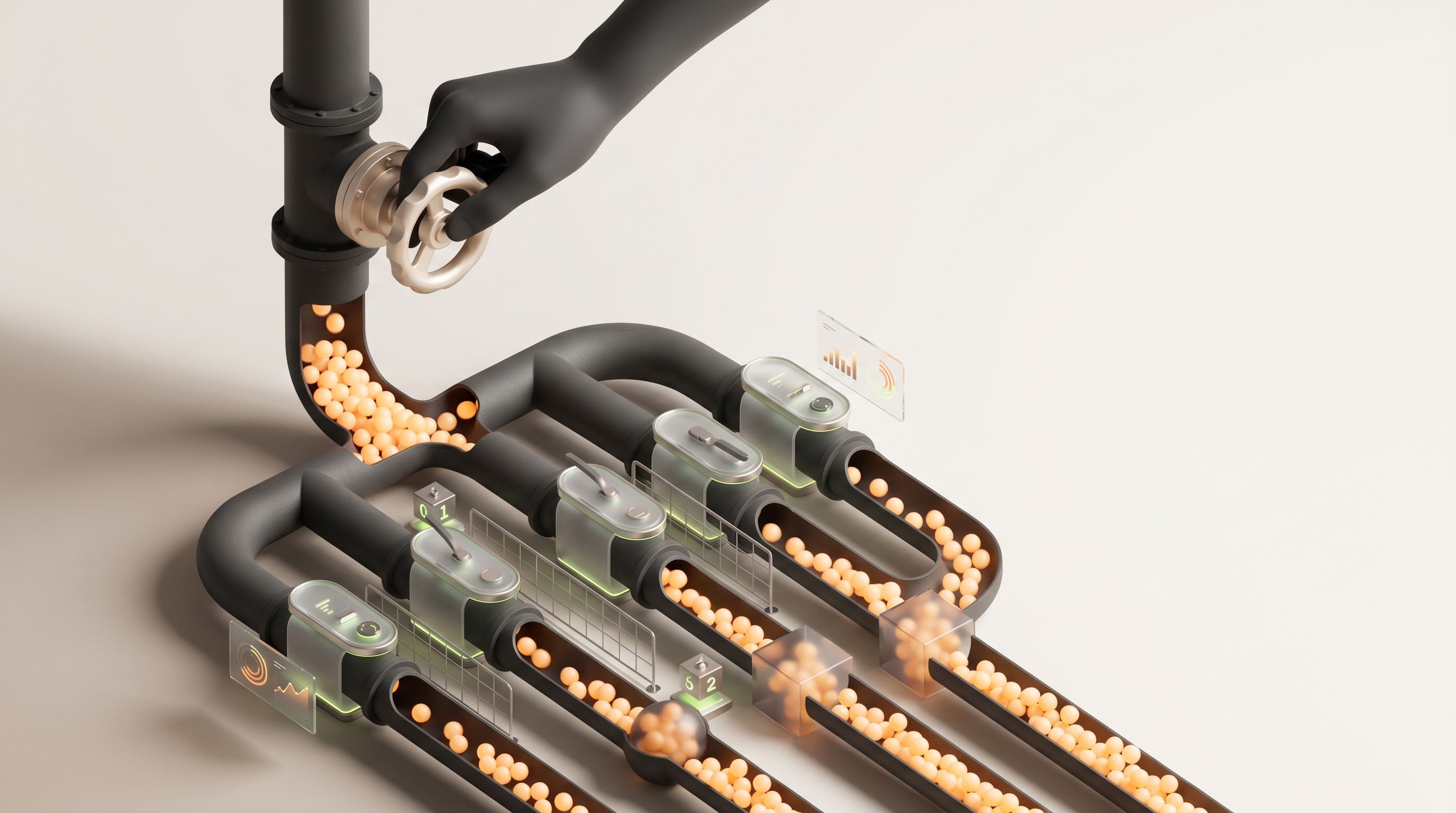

This is not a management problem. It is a throughput problem. Think of leadership as a pipe. If the pipe narrows at the top, all downstream flow is constrained, no matter how wide the channel below is. You do not need better sales reps or more product features to fix the pipe. You need to change who is authorized, how decisions are measured, and what is pre-approved by data.

Why this matters now

Markets are moving faster. AI-powered revenue systems compress planning cycles from quarters to weeks. Competitive intelligence that once took months now arrives in hours. That speed benefits those who decentralize decision rights. It punishes those who keep the tap in one hand.

The result is measurable. Decision latency cuts into pipeline velocity, deal conversion, and the timing of go-to-market pivots. A seven-day CEO approval loop on $300k deals compounds into multimillion-dollar annual leakage in a $50 million business. Companies that decentralize authority grow 40 to 60 percent faster year over year than those that do not. The arithmetic is simple; the psychology is not.

Thesis

Scaling breaks when leadership remains a single point of approval because market velocity and organizational scale require decisions to happen where information lives. The leader’s role must evolve from approver to architect. That change is neither abdication nor chaos. It is design. Done well it turns leadership into a multiplier. Done badly it becomes the revenue tax that caps your scale at 2 to 3x and then flatlines.

A framework to stop being the bottleneck

The starting point is not delegation for delegation’s sake. The starting point is revenue-first delegation. You delegate P&Ls, not tasks. The framework below is practical, measurable, and intentionally non-aspirational. It names boundaries and provides guardrails that protect margins and brand while accelerating speed.

1) Map decision impact bands

Every recurring decision that touches revenue should be mapped to an impact band. Use ARR or deal size as the axis. A simple set of bands works in practice:

Tactical, up to $10k impact: frontline owner autonomy, automated reporting

Operational, $10k to $500k impact: segment lead approval, pre-configured guardrails

Strategic, $500k to $10M impact: VP or cross-functional committee, data-backed memo

Capital, over $10M impact: CEO or board

Define pre-specified signals, such as deal score, margin thresholds, and customer health indicators, and auto-approve every Operational-band decision that meets them. Calibrate the signals so that roughly 70 percent of the band clears without human review. That single rule accelerates pipeline velocity with minimal brand risk.
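The routing logic implied by the bands can be sketched in a few lines of Python. This is a minimal sketch, not a prescribed implementation: the band limits come from the list above, while the `Deal` fields, the three threshold constants, and the choice to treat each upper limit as inclusive are assumptions made for illustration.

```python
from dataclasses import dataclass

# Upper limits in dollars, taken from the bands above (treated as inclusive).
BANDS = [
    ("Tactical", 10_000),
    ("Operational", 500_000),
    ("Strategic", 10_000_000),
]

# Hypothetical signal thresholds; tune them until roughly 70 percent of
# Operational-band decisions clear without review.
MIN_DEAL_SCORE = 0.7
MIN_GROSS_MARGIN = 0.6
MIN_CUSTOMER_HEALTH = 0.5


@dataclass
class Deal:
    amount_usd: float
    deal_score: float       # hypothetical 0-1 score from the CRM
    gross_margin: float     # fraction of revenue, e.g. 0.7
    customer_health: float  # hypothetical 0-1 indicator


def impact_band(impact_usd: float) -> str:
    """Map an estimated dollar impact to its band; anything larger is Capital."""
    for name, upper in BANDS:
        if impact_usd <= upper:
            return name
    return "Capital"


def route(deal: Deal) -> str:
    """Return who decides: an automatic approval or a named approver."""
    band = impact_band(deal.amount_usd)
    if band == "Tactical":
        return "frontline owner"
    if band == "Operational":
        signals_ok = (
            deal.deal_score >= MIN_DEAL_SCORE
            and deal.gross_margin >= MIN_GROSS_MARGIN
            and deal.customer_health >= MIN_CUSTOMER_HEALTH
        )
        return "auto-approve" if signals_ok else "segment lead"
    if band == "Strategic":
        return "VP or committee"
    return "CEO or board"
```

A $300k deal with strong signals routes to `auto-approve`; the same deal with a weak score falls back to the segment lead rather than climbing to the CEO.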

2) Assign revenue P&Ls to leaders, not tasks

Give segment leaders measurable ARR targets, margin responsibility, and scenario budgets. They should own hiring, pricing experiments, and tactical promotion budgets inside their band. This forces decisions to occur where the consequences are paid for. It also gives the CEO fewer approvals and more aggregated signals to act on.

3) Define econometric guardrails

Pre-authorize decisions using straightforward models. Examples:

— Price concessions below X percent only if modeled to keep LTV/CAC above threshold

— Hiring approvals automatic if expected incremental ARR per seat recovers the fully loaded cost of the seat within the target payback period

— Market entry experiments auto-approved when bottom-up TAM and top-down sizing converge within a tolerance band

Guardrails convert judgment into measurable trade-offs. They keep the system tight without requiring the CEO to intervene for every case.
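The three example guardrails reduce to small, auditable checks. The sketch below assumes hypothetical default thresholds (a 15 percent discount cap, a 3.0 LTV/CAC floor, an 18-month payback limit, a 20 percent TAM tolerance) and a simplifying assumption that a discount lowers LTV proportionally; none of these values come from the article.

```python
def concession_allowed(ltv: float, cac: float, discount_pct: float,
                       max_discount_pct: float = 15.0,
                       min_ltv_cac: float = 3.0) -> bool:
    """Allow a price concession only if it stays under the cap and the
    discounted LTV/CAC ratio remains above the floor."""
    if discount_pct > max_discount_pct:
        return False
    # Assumption: the discount reduces lifetime value proportionally.
    discounted_ltv = ltv * (1.0 - discount_pct / 100.0)
    return discounted_ltv / cac >= min_ltv_cac


def hire_auto_approved(incremental_arr: float, gross_margin: float,
                       loaded_cost: float,
                       max_payback_months: float = 18.0) -> bool:
    """Auto-approve a hire when margin on its expected incremental ARR
    repays the fully loaded cost within the payback limit."""
    monthly_margin = incremental_arr * gross_margin / 12.0
    if monthly_margin <= 0:
        return False
    return loaded_cost / monthly_margin <= max_payback_months


def market_entry_auto_approved(bottom_up_tam: float, top_down_tam: float,
                               tolerance: float = 0.2) -> bool:
    """Auto-approve an experiment when the two TAM estimates differ by no
    more than the tolerance, measured relative to the larger estimate."""
    larger = max(bottom_up_tam, top_down_tam)
    if larger <= 0:
        return False
    return abs(bottom_up_tam - top_down_tam) / larger <= tolerance
```

Because each rule returns a plain yes or no, every rejection can be logged with the inputs that caused it, which is what makes the exception trail reviewable later.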

4) Build AI-Deep Research pods

Data arrives faster than any individual can process. Create small cross-functional pods that own weekly competitive and demand signals. Equip them with agentic research tools that run Monte Carlo scenarios and surface the top three plays weekly. These pods function as shadow leadership. They bring CEO-quality analysis to decisions faster, and they prepare vetted choices for escalation only when variance exceeds a risk threshold.
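What a pod's weekly Monte Carlo run might look like, in its simplest form, is sketched below. Everything here is an assumption for illustration: each play is described by a hypothetical low, most-likely, and high revenue outcome, outcomes are drawn from a triangular distribution, and a play is flagged for escalation when its 5th-percentile outcome falls below a hypothetical risk floor.

```python
import random
import statistics


def simulate_play(rng: random.Random, low: float, mode: float, high: float,
                  n: int = 5000) -> list:
    """Draw n outcomes for one play from a triangular distribution."""
    # Note the argument order of random.triangular: (low, high, mode).
    return [rng.triangular(low, high, mode) for _ in range(n)]


def top_plays(plays: dict, k: int = 3, seed: int = 0,
              risk_floor: float = -100_000) -> list:
    """Rank plays by simulated mean outcome and flag risky ones.

    plays maps a play name to a (low, most_likely, high) tuple in dollars.
    """
    rng = random.Random(seed)
    results = []
    for name, (low, mode, high) in plays.items():
        draws = sorted(simulate_play(rng, low, mode, high))
        p5 = draws[int(0.05 * len(draws))]  # 5th-percentile outcome
        results.append({
            "play": name,
            "mean": statistics.fmean(draws),
            "p5": p5,
            "escalate": p5 < risk_floor,  # downside exceeds the risk floor
        })
    results.sort(key=lambda r: r["mean"], reverse=True)
    return results[:k]
```

The output is the short list the pod brings to the segment leader: three plays ranked by expected value, with only the ones whose downside breaches the floor marked for escalation.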

5) Run leadership stress tests

Use time series and regression on your historical pipeline to simulate different approval latencies. Ask what happens to annual revenue when average decision time moves from 2 days to 7 days for deals in the $100k to $1M range. Those simulations identify where authority reallocation produces the largest marginal benefit.
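A stripped-down version of such a stress test is sketched below. It assumes a linear relationship between approval days and win rate (a linear probability model, chosen only for brevity; a logistic or time-series model would be more defensible on real data) and uses a small, entirely hypothetical history of `(approval_days, won)` pairs.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def win_probability(latency_days, slope, intercept):
    """Predicted win rate at a given latency, clamped to [0, 1]."""
    return min(1.0, max(0.0, intercept + slope * latency_days))


def stress_test(history, open_deals, latencies=(2, 7),
                lo=100_000, hi=1_000_000):
    """Expected revenue from open deals in the lo-hi range per latency."""
    slope, intercept = fit_line([d for d, _ in history],
                                [w for _, w in history])
    pipeline = sum(a for a in open_deals if lo <= a <= hi)
    return {days: pipeline * win_probability(days, slope, intercept)
            for days in latencies}


# Hypothetical history: (approval_days, won) with won as 1 or 0.
HISTORY = [(1, 1), (2, 1), (2, 1), (3, 1), (4, 0),
           (5, 1), (6, 0), (7, 0), (8, 0), (9, 0)]
# Hypothetical open pipeline; the $2M deal falls outside the tested range.
OPEN_DEALS = [150_000, 300_000, 300_000, 750_000, 2_000_000]

if __name__ == "__main__":
    for days, revenue in stress_test(HISTORY, OPEN_DEALS).items():
        print(f"{days}-day approval loop: expected revenue ${revenue:,.0f}")
```

Running the same comparison band by band shows where the gap between the two latencies is widest, which is where reallocating authority pays off first.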

6) Measure decision velocity directly

Create a dashboard that reports decision latency by band, owner, and outcome. Track:

— Median approval time per band

— Pipeline conversion delta before and after approval

— Revenue leak attributed to delayed decisions

Treat improvements in decision velocity as a revenue metric. Tie compensation and bonuses to it where appropriate.
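The first of these metrics is straightforward to compute from a decision log. The sketch below assumes a hypothetical log format, a list of records with `band` and `approval_days` fields, and hypothetical per-band latency targets; a real dashboard would pull both from the CRM.

```python
from collections import defaultdict
from statistics import median


def median_latency_by_band(log):
    """Median approval time in days for each band in the decision log."""
    by_band = defaultdict(list)
    for record in log:
        by_band[record["band"]].append(record["approval_days"])
    return {band: median(days) for band, days in by_band.items()}


def bands_over_target(log, targets):
    """Bands whose median latency exceeds the agreed target, sorted by name."""
    medians = median_latency_by_band(log)
    return sorted(band for band, days in medians.items()
                  if band in targets and days > targets[band])


# Hypothetical decision log and latency targets, in days.
LOG = [
    {"band": "Operational", "approval_days": 1},
    {"band": "Operational", "approval_days": 3},
    {"band": "Operational", "approval_days": 5},
    {"band": "Strategic", "approval_days": 6},
    {"band": "Strategic", "approval_days": 8},
]
TARGETS = {"Operational": 4, "Strategic": 5}
```

Grouping the same log by owner instead of band takes a one-line change and shows whether the latency sits with a role or with a person.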

How this actually increases revenue

Delegation without measurement looks like abdication. Measurement without delegation looks like control. Both fail. When you do both together, three things happen.

First, pipeline velocity increases. Reps convert faster when they can negotiate within known guardrails. Smarter guardrails mean fewer escalations and faster closes.

Second, product and go-to-market pivots happen sooner. Markets are signaling changes faster. When segment leaders can act on weekly research pods, you do not miss seasonal inflection points or competitor moves.

Third, the CEO’s time compounds differently. Instead of signing off on deals, the CEO spends time removing constraints, reallocating capital across segments, and designing new authority lattices. That design work scales; it does not consume.

A concrete example

Consider a $50 million ARR company with an average deal in the $300k range. If the CEO insists on approving all deals above $250k and the average approval loop is seven days, time-to-close lengthens and the company loses deals because customers move on or choose faster vendors. A regression on the company's own pipeline history can size the effect; at this scale, a one-week delay in the high-mid funnel can reduce annual revenue by millions. If the company introduces an Operational band with pre-approved conditions for 70 percent of these deals, decision latency can drop to under 48 hours and annual revenue increases materially, all without the CEO signing more documents.
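The arithmetic behind an estimate like this can be made explicit. The sketch below is one possible model, not the article's: it assumes that each day of approval delay loses a fixed fraction of the deals still waiting, and every input (annual bookings, the share of bookings gated by CEO approval, the daily loss rate) is a hypothetical parameter to be replaced with the company's own figures.

```python
def delay_leakage(annual_bookings: float, gated_share: float,
                  daily_loss_rate: float, delay_days: float) -> float:
    """Estimated annual bookings lost to approval delay.

    annual_bookings: new ARR booked per year if nothing were delayed
    gated_share:     fraction of bookings that waits on the approver
    daily_loss_rate: fraction of waiting deals lost per day of delay
    delay_days:      average length of the approval loop
    """
    surviving = (1.0 - daily_loss_rate) ** delay_days
    return annual_bookings * gated_share * (1.0 - surviving)


def leakage_recovered(annual_bookings, gated_share, daily_loss_rate,
                      old_delay_days, new_delay_days):
    """Bookings recovered by shortening the approval loop."""
    return (delay_leakage(annual_bookings, gated_share,
                          daily_loss_rate, old_delay_days)
            - delay_leakage(annual_bookings, gated_share,
                            daily_loss_rate, new_delay_days))
```

Calling `leakage_recovered` with a seven-day loop and a two-day loop gives the upside of the Operational band under whatever loss rate the pipeline history supports; the result is only as good as that estimate.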

Trade-offs and failure modes

There is risk. Delegation without guardrails creates margin erosion and brand risk. Overly tight guardrails replicate the bottleneck in a different form. CEOs also fear loss of control. That fear is real and functional. The answer is not removal of control, but redistribution of accountability.

Manage trade-offs this way:

— Start with narrow bands, then widen once outcomes stabilize

— Make exceptions visible, not secret. Track every escalated decision and publish the rationale

— Use econometric ceilings on concessions and automated audit trails for pricing

— Build compensation that rewards long-term ARR contribution, not just short-term closes

How elites do it differently

Top performers do three things most companies do not. They pre-authorize scenarios, they run shadow leadership, and they measure decision velocity as rigorously as CAC and churn.

Pre-authorizing scenarios means you design the most common 80 percent of decisions ahead of time with a set of outcomes and a path to escalation. It is the difference between asking permission and following a code.

Shadow leadership uses AI-augmented deputies who run Monte Carlo scenarios and present the top plays before a decision is needed. That preserves authority but moves the bottleneck away from a single person.

Finally, elites benchmark decision velocity externally. They compare their approval times to competitors and treat a lag as a strategic vulnerability. If your competitor can pivot in weeks and you pivot in quarters, you are going to lose market share even if your product is equal.

Practical 90-day plan to remove yourself as the bottleneck

Week 0: Map the decisions

— Inventory every recurring decision that touches revenue

— Assign estimated impact in dollars or ARR exposure

Weeks 1 to 3: Define bands and guardrails

— Create the bands described earlier

— Build simple econometric rules for each band

Weeks 4 to 6: Pilot Operational band autonomy

— Select one segment or geography

— Auto-approve 70 percent of deals meeting the guardrails

— Run weekly AI-Deep Research pods to support the segment

Weeks 7 to 12: Measure and iterate

— Track decision latency, pipeline velocity, and revenue delta

— Publish exception logs for all escalations

— Expand bands where outcomes meet targets

After day 90: Institutionalize

— Convert rules into product flows and salesforce automation

— Tie a portion of leadership compensation to decision velocity improvements

— Run quarterly Monte Carlo war games

Final point of view

Founders who cling to approvals tell themselves they are protecting the company. Often they are protecting the past. If revenue is the lifeblood and leadership is the valve, stop sitting on the valve and start redesigning it. Treat decisions like flows, not trophies. Architect authority. Delegate P&Ls. Measure rigorously. Use AI where it accelerates clarity. The result is not loss of control. It is compoundable throughput.

Your team has a you problem. Now create the system that makes it someone else’s problem, with better outcomes and measurable upside.

Frequently Asked Questions

How can I tell if leadership approval is actually costing us revenue?

Look at approval latency, deal aging, and concentrated approval points by owner. Run a regression simulation that models revenue leakage as approval time stretches, and compare pipeline velocity before and after a single-person approval loop. If conversion drops and time-to-close climbs in high-impact bands, leadership is a tax, not a safeguard.

What is the fastest way to map decision impact bands in my company?

Inventory every recurring revenue decision, then tag each with estimated ARR exposure or deal-size impact and frequency. Use simple thresholds to create bands, assign an owner to each band, and validate with the teams that execute those decisions. Start narrow, then iterate on thresholds once you have real outcome data.

How do I design guardrails that preserve margin while giving autonomy?

Convert judgment into econometric rules, for example permit concessions only when modeled to keep LTV/CAC above a set threshold and cap discount percentages by segment. Add automated audits and exception logs so every outlier is visible and reviewed. Tighten or loosen the rules based on measured margin impact, not anecdote.

What percentage of Operational band decisions should be auto-approved?

Aim to auto-approve roughly 60 to 80 percent of Operational band cases that meet signal criteria, but start at a more conservative rate to build trust. Measure outcomes weekly and expand auto-approval as margin and retention metrics prove stable. The goal is velocity without sacrificing customer economics.

How should I structure revenue P&Ls for segment leaders, practically speaking?

Give each segment leader an ARR target, margin responsibility, and a scenario budget for experiments and hiring within predefined payback rules. Let them own tactical promotions, pricing experiments, and low-to-mid value deal approvals inside their band. Hold them accountable with a dashboard that shows contribution to ARR, margin, and decision velocity.

How do I build an AI-Deep Research pod and what should it deliver weekly?

Assemble a small cross-functional team with sales ops, product strategy, and competitive intel, equip them with agentic analysis tools, and charge them to run Monte Carlo scenarios on key signals. They should surface the top three plays each week with expected upside and risk. That output becomes the vetted input for decisions and reduces escalations.

What does a leadership stress test look like and what metrics matter?

Simulate historical pipeline performance while varying approval latency by band, then measure the impact on time-to-close, conversion rate, and annualized revenue. Focus on deals in the high-mid funnel where delays compound into churn or lost opportunity. Use the results to prioritize which authority reallocations yield the biggest marginal revenue lift.

Which dashboards and tools are non-negotiable to measure decision velocity?

You need a decision-velocity dashboard that shows median approval time by band, pipeline conversion delta pre and post approval, revenue leak attributed to delays, and an exception log. Tie those views into your CRM and finance system so P&Ls and decision metrics align. Automate alerts for bands that exceed agreed latency thresholds.

What are the main failure modes when decentralizing authority and how do I prevent them?

The main risks are margin erosion, brand inconsistency, and hidden exceptions that bypass oversight. Prevent them by starting with tight bands, running automated audits, publishing every exception rationale, and using econometric ceilings on concessions. Also align compensation to long-term ARR so leaders do not trade future revenue for fast closes.

How do I pilot this framework in 90 days without breaking deals?

• Week 0 — map decisions

• Weeks 1 to 3 — define bands and guardrails

• Weeks 4 to 6 — pilot a single segment with 70 percent auto-approval within the Operational band

• Weeks 7 to 12 — measure latency, pipeline velocity, and revenue delta

Keep the pilot scope limited, publish exception logs daily, and roll back rules quickly if margins slip. Expand only after data proves outcomes.

How should compensation change to reward decision velocity without promoting short-termism?

Tie a portion of leadership compensation to long-term ARR contribution, adjusted for churn and margin impact, not just immediate closes. Mix velocity metrics with retention and post-close NPS or product adoption indicators. Use deferred bonuses that vest based on 6 to 12 month outcomes to align incentives.

When is a decision worthy of CEO or board escalation?

Escalate when the modeled capital impact exceeds your Capital band threshold, when outcomes fall outside econometric tolerance bands, or when there is material reputational risk. Also escalate if Monte Carlo scenarios show outsized downside that cannot be mitigated at the segment level. Otherwise, require clean data-backed memos, not gut asks.

How do I benchmark our decision velocity against competitors and why does it matter?

Use competitive intelligence pods to map competitor cycle times, survey win timelines, and compare approval latency in matched deal sizes. Treat any lag as a strategic vulnerability, because faster pivots win market share even with comparable product. Set explicit target deltas and monitor them as part of your go-to-market scorecard.

Key Takeaways

• Treat leadership as a throughput constraint, not a management badge; single-person approval creates a measurable revenue tax that commonly caps scale between $50 million and $200 million ARR.

• Map every recurring revenue decision into impact bands tied to ARR or deal size, and auto-approve roughly 70 percent of the Operational band when predefined signals and guardrails align.

• Delegate P&Ls to segment leaders with clear ARR and margin targets so decisions are made where consequences land, reducing CEO approvals to aggregated signals and exceptions.

• Replace discretionary judgment with econometric guardrails: for example, allow price concessions only if modeled LTV/CAC stays above threshold, and auto-approve hires that meet payback criteria.

• Create AI-Deep Research pods that run weekly Monte Carlo scenarios and surface the top three plays, serving as shadow leadership that short-circuits unnecessary escalations.

• Measure decision velocity as a revenue metric via dashboards that report median approval time by band, pipeline conversion delta, and revenue leakage, and tie a portion of leadership comp to improvements.

• Execute a 90-day plan: inventory decisions, define bands and guardrails, pilot Operational autonomy in one segment, measure revenue impact, then convert rules into product flows and comp structures.