Short Answer

Pressure doesn't create clarity; it exposes whether your revenue architecture had clarity to begin with.

Treat pressure as a litmus test and install three systems: Reveal, Validate, and Architect.

• Reveal — weekly AI diagnostics on CRM and conversations

• Validate — 30–90 day revenue-tied micro-experiments

• Architect — productized plays gated by research oversight

Do that and capture rates, pricing premiums, and scalability become compoundable; ignore it and you will be chasing share with blind confidence.

Pressure doesn’t create clarity. It exposes whether clarity existed all along.

That distinction matters. Under stress, markets stop being polite. Windows shrink. Buyers become choosier. When revenue falls short, leaders rarely discover a new truth. They discover the gaps between what they assumed and what the market actually buys.

In 2026 the effect is amplified. AI turns every CRM note and sales conversation into a live diagnostic. Competitive shifts happen faster. Addressable markets compress in places. The organizations that scale are not luckier. They had clarity built into their architecture before the storm hit. The ones that fail relied on plausible stories and hope.

Thesis

Treat pressure as a litmus test, not a teacher. Build the systems that reveal truth early, validate assumptions quickly, and reallocate capital where the math and behavior align. Do that and pressure stops being an existential threat. It becomes a throughput multiplier.

What pressure actually reveals

1. Demand is real or imagined. Pressure strips away polite buy signals. When pipelines dry, you see which buyer profiles were fantasies and which reflected real willingness to pay.

2. Competitive wiring is present or absent. Under stress, rivals exploit small edges. If your positioning is shallow, price and features will be your undoing.

3. Operational debt exists or does not. Teams that scale have playbooks, data flows, and governance. Fragile operations discover these gaps the hard way.

Those revelations map directly to revenue outcomes. When clarity is present, capture rates rise 20 to 40 percent, pricing premiums become sustainable, and scalability increases 2 to 3 times. When clarity is absent, conversion collapses and TAM estimates turn out to have been twice too optimistic.

A surgical framework to make clarity non-negotiable

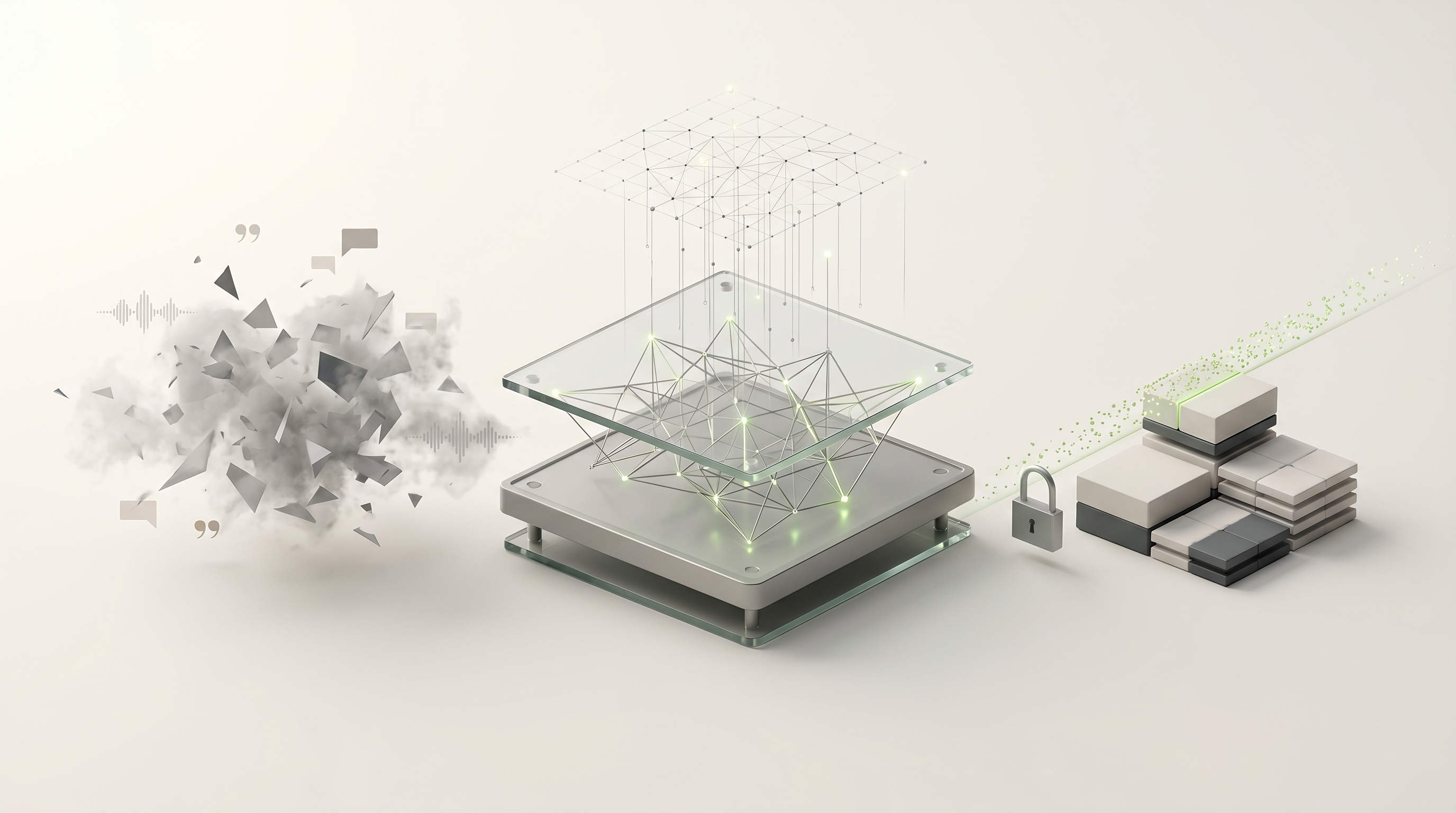

I use a three-part architecture: Reveal, Validate, Architect. Each step is a system, not an occasional exercise.

Reveal, systematically

Purpose: Turn pressure signals into diagnostic data before they become crises.

Practices:

— Weekly AI deep research on CRM and conversation data. Let AI surface closed-lost patterns, churn triggers, and feature requests. Target outcomes: 20 percent churn reduction and $2 to $5 million recaptured in enterprise segments where conversations reveal recoverable opportunities.

— Benchmark five to ten competitors on pricing, positioning, and product gaps, and validate those gaps with customer surveys. You will find white spaces that competitors ignore. Those white spaces accelerate penetration by roughly 25 percent.

— Run a hybrid TAM every quarter. Combine bottom-up math, ACV times addressable company counts, with top-down industry signals. This keeps your allocation honest, and prevents spending into inflated markets.
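The hybrid TAM arithmetic is simple enough to sketch. The snippet below is a minimal illustration, not a prescribed model: the `Segment` fields, the `serviceable_share` input, the 25 percent review tolerance, and the choice to plan on the lower of the two figures are all assumptions added for the example, and every number is made up.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    name: str
    acv: float                 # average contract value per account, per year
    addressable_accounts: int  # companies that fit the buyer profile


def bottom_up_tam(segments):
    """Bottom-up math: ACV times addressable company count, summed."""
    return sum(s.acv * s.addressable_accounts for s in segments)


def top_down_tam(industry_spend, serviceable_share):
    """Top-down signal: industry spend scaled by the share you can serve."""
    return industry_spend * serviceable_share


def hybrid_tam(segments, industry_spend, serviceable_share, tolerance=0.25):
    bottom = bottom_up_tam(segments)
    top = top_down_tam(industry_spend, serviceable_share)
    largest = max(top, bottom)
    gap = abs(top - bottom) / largest if largest else 0.0
    return {
        "bottom_up": bottom,
        "top_down": top,
        "planning_tam": min(bottom, top),  # assumption: plan on the lower figure
        "gap": gap,
        "needs_review": gap > tolerance,   # large disagreement means recheck inputs
    }


# Illustrative inputs only.
segments = [
    Segment("mid-market logistics", acv=40_000, addressable_accounts=1_200),
    Segment("enterprise retail", acv=150_000, addressable_accounts=300),
]
result = hybrid_tam(segments, industry_spend=2_000_000_000, serviceable_share=0.06)
print(result)
```

When the two methods disagree by more than the tolerance, that disagreement is itself the finding: one of the inputs is a story rather than a count.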

Why it matters: Reveal removes plausible deniability. You either have buyers who behave predictably, or you do not. The data cannot be optimistic for you.

Validate, fast

Purpose: Convert revealed patterns into investment decisions or pruning.

Practices:

— Micro-experiments tied to revenue metrics. Test pricing, value props, and sales plays in 30 to 90 day cycles. Accept small wins and scale them. Kill slow bets fast.

— Behavioral segmentation over demographics. Shift creative and pitch scripts to decision triggers. Teams that do this lift conversions by about 35 percent.

— Pressure scenarios monthly. Simulate a 20 percent funnel contraction, a price war, or a key region becoming saturated. Score each product and GTM motion on survivability and upside.
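A monthly pressure scenario can start as a few lines of arithmetic. The sketch below assumes a deliberately crude model in which each GTM motion is leads times win rate times price, minus its cost. The `Motion` fields, the scenario multipliers other than the 20 percent funnel contraction, and the definitions of "upside" and "survives" are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class Motion:
    name: str
    monthly_leads: float
    win_rate: float      # fraction of leads that close
    avg_price: float     # revenue per closed deal
    monthly_cost: float  # fully loaded cost of running the motion


# Each scenario scales leads, win rate, and price. Multipliers are illustrative.
SCENARIOS = {
    "funnel_contraction": {"leads": 0.80, "win_rate": 1.00, "price": 1.00},
    "price_war":          {"leads": 1.00, "win_rate": 0.90, "price": 0.85},
    "region_saturated":   {"leads": 0.70, "win_rate": 0.95, "price": 1.00},
}

NO_SHOCK = {"leads": 1.0, "win_rate": 1.0, "price": 1.0}


def monthly_margin(motion, shock=NO_SHOCK):
    revenue = (motion.monthly_leads * shock["leads"]
               * motion.win_rate * shock["win_rate"]
               * motion.avg_price * shock["price"])
    return revenue - motion.monthly_cost


def score(motion):
    shocked = {name: monthly_margin(motion, s) for name, s in SCENARIOS.items()}
    worst = min(shocked.values())
    return {
        "motion": motion.name,
        "upside": monthly_margin(motion),  # margin with no shock applied
        "worst_case": worst,
        "survives_all": worst > 0,         # still pays for itself in every scenario
    }


inbound = Motion("inbound", 200, 0.10, 10_000, 120_000)
outbound = Motion("outbound", 100, 0.05, 30_000, 130_000)
for m in (inbound, outbound):
    print(score(m))
```

In this made-up example the outbound motion looks profitable at baseline and fails every shock, which is the kind of result the monthly exercise exists to surface.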

Why it matters: Validation is the difference between pivoting because the market told you to, and pivoting because you felt anxious. You do not chase share in zero-sum markets. You weaponize competitor weaknesses identified by signal intelligence.

Architect, mercilessly

Purpose: Lock clarity into systems so pressure reveals progress, not failure.

Practices:

— Revenue intelligence dashboard. Merge competitive signals, churn predictors, and TAM movement. Make it the dashboard leadership uses weekly. It scales focus and prevents hero-wins from masking systemic leakage.

— Oversight-gate every strategic spend. No reallocation, launch, or hire above a threshold without research validation. This reduces blind expansion inefficiency by roughly 50 percent.

— Productized experiments. Turn winning plays into repeatable modules that sales and marketing can deploy without a bespoke rebuild.

Why it matters: Architecture converts tactical wins into compoundable throughput. Without it, every success is one-off and fragile under the next shock.

Contrarian moves the winners make

Do not default to market share wars. In compressed markets, share is zero-sum. Instead:

— Weaponize indirect competitors. Use AI to analyze their conversation data for churn signals. Preempt their customers with offers that address the small but decisive nuisances no one else solves.

— Hunt third-order gaps. The first-order gap is product fit. The second is pricing. The third is plumbing, distribution, or regulation quirks in regions or verticals. These yield outsized ROI because few teams are looking there.

— Make research governance the executive function. Top performers require management sign-off on hypotheses with evidence, not opinions alone.

Short case, concrete math

A mid-market SaaS vendor saw a pipeline drop after a competitor launched a feature. The immediate reaction among the team was price cuts. Instead, they ran weekly AI scans of lost deal conversations and found a pattern. Buyers were leaving not for the feature, but for implementation speed and bespoke onboarding.

Actions taken:

— Launched a productized fast-start package priced 15 percent higher than baseline, with a 30 day implementation SLA.

— Trained a small team to deliver the package and embedded it in the sales sequence.

— Re-ran hybrid TAM to re-allocate budget to the vertical where implementation speed mattered most.

Result in six months: capture rates rose 30 percent in targeted segments, pricing held, churn dropped, and the vendor recaptured $3.8 million in revenue that would have been lost to discounting.

A 90-day operating cadence to install clarity

Weeks 1 to 4: Baseline. Run AI deep research on the last 12 months of closed-lost and churn data. Publish a one-page diagnosis with three prioritized gaps.

Month 2: Validate. Run two micro-experiments tied to revenue. One pricing variant. One delivery or onboarding variant. Use control groups and revenue metrics only.

Month 3: Architect. Lock the winning play into a productized module. Build the revenue intelligence tile that tracks it. Create an oversight gate protocol for any new GTM spend.

If you do nothing else, enforce the oversight gate. Require a research brief for any new initiative above a defined spend. That alone prevents half of the common misallocations I see in scaling companies.

Final clarity

Pressure does not give you ideas. It reveals whether the ideas you already had were real. Winners design for that revelation. They build research, experiments, and governance into the revenue stack so stress reveals progress, not failure.

If you want your next cycle to compound, make clarity the infrastructure. Make research routine. Make decisions evidence driven. Then pressure stops being a hazard. It becomes the quality control your business needed all along.

I work with operators who have already built machines and want them to multiply. My work is not coaching. It is architecture. If your organization is discovering uncomfortable truths under stress, that is useful. The better move is to stop waiting for pressure and install the systems that reveal truth earlier, while the consequences are still optional.

Frequently Asked Questions

How do I run a weekly AI deep research process on CRM and sales conversations without swamping my team?

Automate the heavy lifting: have AI tag and summarize closed-lost themes, churn signals, and feature asks, then surface a one-page diagnostic for leadership each week.

Limit human review to a small cross-functional squad that triages high-impact patterns and assigns 1 to 2 action owners.

That keeps cadence tight, avoids noise, and converts signal into immediate micro-experiments.

What metrics should my revenue intelligence dashboard track to reveal clarity under pressure?

• Capture rate

• Win/loss drivers

• Churn triggers

• ACV movement by segment

• Competitor signal frequency

• A survivability score per product

• A signal-to-noise ratio for incoming leads

Normalize metrics week over week, keep visuals simple, update weekly, and make the dashboard the single source for allocation decisions.

How do I structure an oversight-gate so it actually stops wasteful expansion without slowing the business?

• Require a short research brief that ties expected outcomes to revenue metrics

• Include a risk score

• Require a two-step validation plan before any spend above the threshold

• Set fast review SLAs

• Assign a decision owner

• Allow conditional approvals with built-in experiments

The gate should block opinion-led spending and force evidence before you scale.
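The checklist above translates into a small rule set. This is a sketch under stated assumptions, not a finished policy: the threshold value, the 1 to 10 risk scale, the field names, and the rule that a high-risk request gets conditional approval only when it carries a built-in experiment are all hypothetical. Review SLAs are a process matter and are left out of the code.

```python
from dataclasses import dataclass
from typing import Optional

SPEND_THRESHOLD = 50_000  # hypothetical; set this to your own gate threshold
MAX_RISK_SCORE = 7        # hypothetical cutoff on a 1 to 10 scale


@dataclass
class SpendRequest:
    name: str
    amount: float
    decision_owner: Optional[str] = None
    revenue_metric: Optional[str] = None  # metric the research brief ties to
    risk_score: Optional[int] = None
    validation_steps: tuple = ()          # the validation plan, step by step
    has_builtin_experiment: bool = False


def review(req):
    """Return (decision, notes) for a spend request."""
    if req.amount <= SPEND_THRESHOLD:
        return "approved", []             # below the gate, no brief required

    missing = []
    if not req.revenue_metric:
        missing.append("research brief tied to a revenue metric")
    if req.risk_score is None:
        missing.append("risk score")
    if len(req.validation_steps) < 2:
        missing.append("two-step validation plan")
    if not req.decision_owner:
        missing.append("decision owner")
    if missing:
        return "blocked", missing

    if req.risk_score > MAX_RISK_SCORE:
        if req.has_builtin_experiment:
            return "conditional", ["release budget in stages as the experiment reports"]
        return "blocked", ["risk score too high without a built-in experiment"]
    return "approved", []


request = SpendRequest(
    "EMEA launch", 400_000, decision_owner="CRO", revenue_metric="capture rate",
    risk_score=9, validation_steps=("pilot in one country", "pricing test"),
    has_builtin_experiment=True,
)
print(review(request))
```

The useful property is that a blocked request comes back with the list of missing evidence, so the conversation is about the brief and not about whose opinion wins.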

When should I run a hybrid TAM and how often is too often?

Run a full hybrid TAM quarterly for strategic allocation, and a lighter check monthly focused on any segment moving more than 10 percent in addressable counts or ACV.

Quarterly gives disciplined capital planning; monthly keeps you honest about market shifts. Doing it ad hoc only when things go wrong means you are reallocating defensively, not proactively.
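The lighter monthly check can be a simple comparison against last month's snapshot. The sketch below assumes each snapshot is a plain dictionary keyed by segment name with `accounts` and `acv` fields; that shape and the sample numbers are illustrative assumptions.

```python
def segments_to_recheck(previous, current, threshold=0.10):
    """Flag segments whose addressable counts or ACV moved more than threshold.

    previous and current map segment name -> {"accounts": int, "acv": float}.
    """
    flagged = {}
    for name, now in current.items():
        before = previous.get(name)
        if before is None:
            continue  # new segment: leave it to the quarterly full run
        moves = {
            field: (now[field] - before[field]) / before[field]
            for field in ("accounts", "acv")
        }
        big = {f: round(m, 3) for f, m in moves.items() if abs(m) > threshold}
        if big:
            flagged[name] = big
    return flagged


last_month = {
    "logistics": {"accounts": 1200, "acv": 40_000},
    "retail": {"accounts": 300, "acv": 150_000},
}
this_month = {
    "logistics": {"accounts": 1020, "acv": 41_000},
    "retail": {"accounts": 310, "acv": 148_000},
}
print(segments_to_recheck(last_month, this_month))
```

Only flagged segments get a fresh look between quarterly runs, which keeps the monthly check cheap.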

How do I design micro-experiments that actually move revenue in 30 to 90 days?

• Pick a single revenue metric

• Isolate one variable

• Run the test against a control with statistically meaningful cohorts

• Keep hypotheses tight and limit scope to tactical channels or segments

• Predefine success thresholds tied to lift and cost of scale

If it wins, allocate incremental budget immediately; if not, kill it and document why.
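For a conversion-style revenue metric, the control comparison can be checked with a standard two-proportion z-test. This is one reasonable test among several, not a method the framework mandates. The sketch assumes the metric is a conversion count per cohort, that the control has at least one conversion, and that the success thresholds (`min_lift`, `alpha`) were fixed before the test started; the cohort sizes are invented.

```python
from math import erf, sqrt


def two_proportion_test(control_conv, control_n, variant_conv, variant_n):
    """Compare variant against control on a single conversion metric."""
    p_c = control_conv / control_n
    p_v = variant_conv / variant_n
    pooled = (control_conv + variant_conv) / (control_n + variant_n)
    se = sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / variant_n))
    z = (p_v - p_c) / se
    # One-sided p-value: chance of a lift at least this large if nothing changed.
    p_value = 0.5 * (1 - erf(z / sqrt(2)))
    return {"lift": (p_v - p_c) / p_c, "z": z, "p_value": p_value}


def decide(result, min_lift=0.10, alpha=0.05):
    """Apply the predefined success threshold: scale only on a real, large lift."""
    if result["lift"] >= min_lift and result["p_value"] < alpha:
        return "scale"
    return "kill"


# Illustrative cohorts: 400 accounts each, one variable changed in the variant.
strong = two_proportion_test(control_conv=40, control_n=400,
                             variant_conv=64, variant_n=400)
weak = two_proportion_test(control_conv=40, control_n=400,
                           variant_conv=44, variant_n=400)
print(decide(strong), decide(weak))
```

With small cohorts, a lift that looks impressive in the CRM often fails this check, which is the point of insisting on statistically meaningful groups.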

What are the main trade-offs of turning a winning play into a productized module?

Productizing accelerates repeatability and margin, but requires upfront investment in documentation, training, and delivery tooling, which can slow short-term velocity.

The trade-off is between one-off revenue wins and predictable, compoundable throughput. Plan for a three to six month break-even on build costs and enforce version control so modules stay tight to the revenue signal.

How should startups prioritize whether to fight for market share or hunt third-order gaps?

If the market is zero-sum and margins compress, deprioritize raw share grabs and target third-order gaps that competitors ignore.

• Onboarding friction

• Regulatory quirks

• Distribution plumbing

Those gaps deliver higher ROI per dollar and are less likely to trigger a price war. Use a survivability and upside score to make the call and move resources to the highest expected revenue-per-dollar channel.

What does a practical pressure scenario simulation look like, and how do leaders use the results?

Simulate a defined shock, then rerun your revenue model to see impact on CAC, LTV, and cash runway over 90 to 180 days.

• Examples: a 20 percent funnel contraction, a regional saturation, or a competitor feature launch

Score each product and GTM motion on survivability and upside, then convert the top mitigations into prioritized experiments or budget reallocations. Use the simulation as a rehearsal, not a panic button.
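Here is a minimal sketch of such a rerun, assuming a very simple subscription model: a steady lead flow, a fixed win rate, a flat monthly churn rate, and constant spend. The function name, every parameter, and all the numbers are hypothetical; a real revenue model will have more moving parts.

```python
def run_scenario(cash, customers, leads, win_rate, arpa, gross_margin,
                 churn, gtm_spend, other_costs, lead_shock=0.0, months=6):
    """Rerun a simple revenue model with an optional funnel contraction.

    arpa is monthly revenue per account; churn is a monthly rate.
    Assumes shocked leads and churn are both above zero.
    """
    new_per_month = leads * (1 - lead_shock) * win_rate
    cac = gtm_spend / new_per_month
    ltv = arpa * gross_margin / churn
    cash_out_month = None
    for month in range(1, months + 1):
        customers = customers * (1 - churn) + new_per_month
        cash += customers * arpa * gross_margin - gtm_spend - other_costs
        if cash < 0 and cash_out_month is None:
            cash_out_month = month  # first month the model runs out of cash
    return {
        "cac": cac,
        "ltv": ltv,
        "ltv_to_cac": ltv / cac,
        "ending_cash": cash,
        "cash_out_month": cash_out_month,
    }


# Illustrative company; months=6 covers the 180-day end of the range.
company = dict(cash=1_500_000, customers=200, leads=400, win_rate=0.05,
               arpa=2_000, gross_margin=0.8, churn=0.02,
               gtm_spend=250_000, other_costs=200_000)
baseline = run_scenario(**company)
shocked = run_scenario(**company, lead_shock=0.20)
print(baseline)
print(shocked)
```

Comparing the two dictionaries side by side shows how much of the damage lands on CAC, and how much on cash, before anyone has to decide anything under stress.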

How do you spot when buyer profiles in your pipeline are fantasies, not real revenue sources?

• Long cycles with no conversion

• Repeated feature asks with no purchase behavior

• High engagement from accounts that never match decision-making roles

If those accounts consistently fail micro-experiments or show low willingness to pay, mark them as low-probability and deprioritize. Reallocate outreach to segments where ACV and close velocity align with purchase intent.

Is it ethical and legal to analyze competitors' conversation data with AI, and how should teams approach this?

You're fine using public signals and your own win/loss conversation data, but avoid scraping private communications or personal data that violates terms or privacy laws.

• Use AI to synthesize public reviews, case studies, and anonymized signals from your CRM

Frame outreach as problem-solving offers that address observed pain points, not as opportunistic poaching.

When should a team stop doubling down on a slow bet and cut it, based on the Reveal/Validate/Architect framework?

• If a micro-experiment fails to meet predefined revenue lift thresholds after two clean cycles

• If hybrid TAM and competitive benchmarks show an addressable market less than half of initial estimates

Lock that decision into oversight-gate rules so cutting slow bets is routine, not emotional. Redeploy capital to validated plays with clear scaling paths.
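Written as a rule, the two triggers might look like the sketch below. It assumes that either trigger alone is enough to cut, and that "two clean cycles" means the two most recent cycles; both readings are assumptions, as are the function name and inputs.

```python
def should_cut(cycle_lifts, lift_threshold, initial_tam, current_tam):
    """Decide whether a slow bet should be cut, with the reasons.

    cycle_lifts: measured revenue lift per clean experiment cycle, oldest first.
    """
    reasons = []
    last_two = cycle_lifts[-2:]
    if len(last_two) == 2 and all(lift < lift_threshold for lift in last_two):
        reasons.append("missed lift threshold in two clean cycles")
    if current_tam < 0.5 * initial_tam:
        reasons.append("addressable market under half of initial estimate")
    return bool(reasons), reasons


# Illustrative bet: two weak cycles, and a TAM that shrank from 90M to 40M.
print(should_cut([0.02, 0.03], lift_threshold=0.10,
                 initial_tam=90_000_000, current_tam=40_000_000))
```

Returning the reasons alongside the decision gives the oversight gate a record of why the bet was cut.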

What short-term KPI improvements should leaders expect after installing clarity systems?

• An increase in capture rates of 15 to 40 percent

• A reduction in churn signals by around 20 percent

• A two to three times improvement in scalable throughput for repeatable plays

You will also see faster, cheaper validation cycles and fewer emergency discounting decisions. Those shifts compound quickly because decisions become evidence-driven instead of hope-driven.

Key Takeaways

• Treat pressure as a litmus test, not a teacher. Build systems that reveal whether buyers, pricing, and operations are real before you reallocate capital.

• Install weekly AI analysis on CRM and conversation data, plus a quarterly hybrid TAM, to expose closed-lost patterns, churn triggers, and inflated addressable markets, aiming to cut churn about 20 percent and recapture $2 to $5 million in enterprise opportunities.

• Enforce a research-validated oversight gate for any hire, launch, or reallocation above a defined threshold, reducing blind expansion inefficiency by roughly 50 percent.

• Run 30 to 90 day micro-experiments tied solely to revenue metrics, scale small wins fast, and kill slow bets decisively so capital follows evidence and not narratives.

• Productize winning plays into repeatable modules embedded in the sales sequence, converting tactical wins into compoundable throughput rather than one-off heroics.

• Shift segmentation to behavioral decision triggers and weaponize indirect competitor conversation intelligence to preempt churn and lift conversion rates by around 35 percent.

• Hunt third-order gaps such as implementation speed, distribution plumbing, or regional regulatory frictions. These low-attention edges preserve pricing and yield outsized ROI without entering zero-sum share battles.