Short Answer

No. Motivation is a short-lived variance booster, not a scaling lever; it caps revenue, raises churn, and masks systemic leaks.

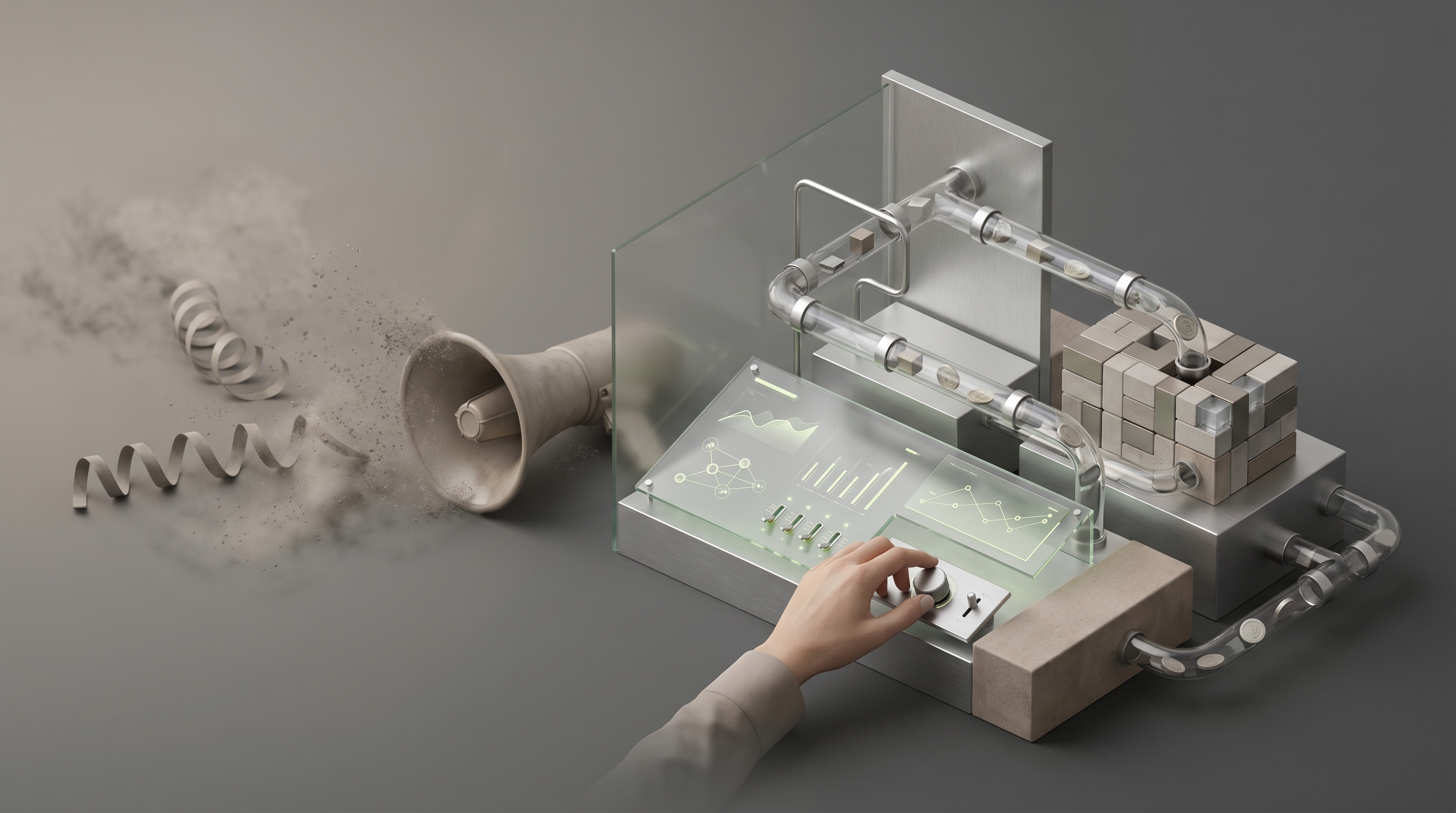

Replace rallies with revenue architecture:

• predictable GTM dashboards

• weekly AI market scans

• regression-based pricing

• retention loops

• role-aligned hiring

Reallocate at least 30 percent of discretionary culture spend to systems and experiments, and expect revenue gains in the 3 to 15 percent range, up to 15 percent lower sales churn, and a 20 percent tighter forecast error within a few quarters.

Motivation is seductive. It feels immediate, visible, cheap. Leaders rally a team, morale spikes, LinkedIn applause follows. But that feeling is not a strategy. It is a short-lived energy input applied to a long-term engine, and when pressure arrives the energy evaporates, decisions revert to habit, and revenue stops compounding.

Thesis

Motivation as a scaling strategy caps revenue, increases churn, and hides systemic weaknesses. In 2026, when AI and data systems deliver measurable revenue uplifts, relying on human willpower is an avoidable constraint. Operator evidence shows motivation-dependent organizations underperform peers by 20 to 30 percent, experience up to 25 percent higher sales churn, and persistently misallocate investment into pep experiences instead of scalable levers.

Why this matters now

The market moved. GenAI, predictive analytics, and automated GTM systems are no longer experimental. Firms that run weekly four-perspective market scans, automate pricing elasticity models, and instrument cross-functional KPI dashboards are finding low-friction revenue gains in the 3 to 15 percent range, sometimes more. Those gains are predictable, auditable, and repeatable. Motivation delivers none of that. It is stochastic. It is costly. In a world where predictable minor lifts compound into material outcomes, unpredictability is the true tax on growth.

A contrarian clarity

Most leaders mistake motion for progress. Pep talks change tone, not throughput. High performers swap motivational rituals for architecture: they trade rallying cries for systems that force the right behaviors without anyone having to rally. That shift is not moral. It is mathematical. Revenue compounds when the system routes every lead through the highest-probability path, when pricing changes are tested, and when churn drivers are measured. Motivation obscures those levers because it feels like leadership. It is often the founder's ego tax, a cosmetic fix that postpones the real work.

The revenue architecture lens

Treat scaling as an engineering problem. There are five architectural pillars that determine whether growth compounds or stalls.

1. Predictability, not pep

Predictability is the capacity to forecast revenue paths within a narrow band. It requires clean inputs, repeatable processes, and measurable outputs. Motivation increases variance, not central tendency. Replace transient rallies with daily incentives embedded inside workflows, outcome-based SLAs, and micro-triggers in your CRM that require data, not optimism, to progress a deal.

2. Throughput over theatrics

Throughput is how many qualified opportunities move end to end per unit of time. It is a function of pipeline velocity, conversion efficiency, and friction points. Motivation can temporarily increase activity, but unless you remove friction and codify winning plays, activity produces noise, not revenue. Invest in playbooks that convert, automation that reduces handoffs, and a conversion-focused scorecard that sits on the leader's dashboard.
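One way to put a number on throughput is the widely used sales-velocity formula: qualified opportunities times win rate times average deal size, divided by sales-cycle length. That formula is an assumption here, not something this article prescribes, and every name and figure in the sketch below is hypothetical.

```python
def pipeline_velocity(qualified_opps: int, win_rate: float,
                      avg_deal_size: float, cycle_days: float) -> float:
    """Expected revenue per day moving through the pipeline (assumed formula)."""
    if cycle_days <= 0:
        raise ValueError("cycle_days must be positive")
    return qualified_opps * win_rate * avg_deal_size / cycle_days


# Hypothetical scenario: identical activity, but friction removed so the
# sales cycle shortens from 60 to 50 days.
before = pipeline_velocity(qualified_opps=120, win_rate=0.20,
                           avg_deal_size=15_000, cycle_days=60)
after = pipeline_velocity(qualified_opps=120, win_rate=0.20,
                          avg_deal_size=15_000, cycle_days=50)
lift = (after - before) / before

print(f"before={before:.0f}/day after={after:.0f}/day lift={lift:.1%}")
```

The point of the sketch is that velocity moves without any extra activity: only the friction term changed.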

3. Pricing and promo elasticity as revenue engines

Small changes in price or promotion cadence, modeled and validated with regression, compound quickly. AI systems can surface elasticity patterns across channels and predict profit outcomes. This is where motivation is most destructive, because leaders love grand gestures like company-wide incentive weeks while ignoring the three percent margin erosion in a poorly structured promo program.

4. Retention and compounding LTV

Sustained revenue comes from retention, not rallies. Motivation increases churn by creating unsustainable effort expectations and by masking poor onboarding and product-fit issues. The right architecture replaces temporary motivational boosts with retention loops, cohort analysis, and NPS-linked improvement cycles that move lifetime value materially.

5. Capital flow and allocation

Where you spend time and money signals what you value. If 60 percent of culture spend goes to offsites and experiential hires, that is capital that could automate work, buy data, or shorten decision cycles. Reallocate low-return motivation budgets into tools and experiments with an expected return in the 3 to 15 percent range, and measure the delta quarter to quarter.

Practical pathway, step by step

The decision to stop leaning on motivation is simple. The work is not. Here is an operator-level plan that replaces rallies with revenue architecture, and captures the upside the market now offers.

Step 0, baseline the damage

Run a Motivation Spend Audit across the last 12 months. Capture two numbers: total spend and measurable outcomes. Include offsites, external trainers, internal pep budgets, and the opportunity cost of hours spent in non-billable motivational activity. Compare that to potential tool investments and conservative ROI estimates. In sample sets, reallocation to AI market scans and dashboarding captures 3 to 15 percent more revenue, and reduces sales churn by up to 15 percent.
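The audit arithmetic can be sketched in a few lines. This is a minimal illustration, not a finished tool: the line items, the loaded hourly cost, and the annual revenue figure are all made up, and applying the 3 to 15 percent range to total annual revenue is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass
class SpendItem:
    name: str
    direct_cost: float         # invoices: offsites, trainers, pep budgets
    hours: float = 0.0         # staff hours spent in the activity
    hourly_cost: float = 0.0   # loaded cost per hour (hypothetical figure)

    @property
    def total(self) -> float:
        # Direct cost plus the opportunity cost of the hours consumed
        return self.direct_cost + self.hours * self.hourly_cost


def audit(items, annual_revenue, reallocation_share=0.30,
          uplift_range=(0.03, 0.15)):
    """Total motivation spend, the share to reallocate, and an uplift band."""
    total_spend = sum(item.total for item in items)
    low, high = (annual_revenue * u for u in uplift_range)
    return {
        "total_spend": total_spend,
        "reallocated": total_spend * reallocation_share,
        "uplift_low": low,
        "uplift_high": high,
    }


# Hypothetical 12-month ledger
items = [
    SpendItem("offsite", 80_000, hours=400, hourly_cost=75),
    SpendItem("external trainer", 30_000, hours=200, hourly_cost=75),
    SpendItem("internal pep budget", 20_000),
]
report = audit(items, annual_revenue=5_000_000)
for key, value in report.items():
    print(f"{key}: {value:,.0f}")
```

Presenting the reallocated amount next to the uplift band is what makes the trade-off concrete for leadership.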

Step 1, stand up the AI-Market Scan

Implement the four-perspective scan weekly, covering company filings, analyst notes, journalist pieces, and expert transcripts. Feed outputs into a central knowledge layer. Target one immediate KPI, for example price gap identification, with a hypothesis like, "We can increase ASP by 5 percent in segment X without demand loss." Test, measure, iterate. This replaces guesswork with a predictable discovery cadence.

Step 2, rebuild the GTM dashboard

Create a cross-functional dashboard that tracks pipeline velocity, conversion by stage, churn by cohort, promo ROI, and pricing elasticity. Make it the single source of truth. Configure alerts that force action, not pep. If promo ROI drops below a threshold, the system throttles the next campaign until a control test runs. That is architecture, not exhortation.
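The throttle rule described above is simple enough to express as a small state machine. A minimal sketch, assuming ROI is expressed as revenue returned per unit of spend; the class name, the 1.2 threshold, and the method names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PromoGate:
    roi_threshold: float      # hypothetical floor, e.g. 1.2 back per 1 spent
    throttled: bool = False

    def record_roi(self, roi: float) -> None:
        # A reading below the threshold blocks the next campaign
        if roi < self.roi_threshold:
            self.throttled = True

    def record_control_test(self) -> None:
        # Only a completed control test lifts the throttle
        self.throttled = False

    def can_launch(self) -> bool:
        return not self.throttled


gate = PromoGate(roi_threshold=1.2)
gate.record_roi(1.5)
print(gate.can_launch())   # healthy ROI, campaigns may proceed
gate.record_roi(0.9)
print(gate.can_launch())   # below threshold, next campaign is blocked
gate.record_control_test()
print(gate.can_launch())   # control test ran, throttle lifted
```

Note the design choice: a later good ROI reading does not clear the throttle on its own. Only the control test does, which is what forces the action.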

Step 3, automate pricing and promo modeling

Build a regression-based pricing engine, even a simple one, that forecasts margin impact by channel. Start with three variables: price, promo depth, and acquisition channel. Run A/B tests and fold results back into the model. Even conservative deployments return 3 to 10 percent in gross margin improvement within months.
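To make "even a simple one" concrete, here is a minimal sketch of a three-variable linear model fitted with ordinary least squares in plain Python. Everything in it is an assumption for illustration: the data is synthetic and noise-free, the demand curve is made up, the channel is reduced to a single paid/organic dummy, and the unit cost is hypothetical. Real data will be noisy and will need more observations and validation.

```python
def ols(X, y):
    """Ordinary least squares by solving the normal equations (X'X) b = X'y."""
    n, k = len(X), len(X[0])
    # Augmented matrix [X'X | X'y]
    A = [
        [sum(X[r][i] * X[r][j] for r in range(n)) for j in range(k)]
        + [sum(X[r][i] * y[r] for r in range(n))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting
    for col in range(k):
        pivot_row = max(range(col, k), key=lambda r: abs(A[r][col]))
        if abs(A[pivot_row][col]) < 1e-12:
            raise ValueError("collinear inputs; cannot separate their effects")
        A[col], A[pivot_row] = A[pivot_row], A[col]
        pivot = A[col][col]
        A[col] = [v / pivot for v in A[col]]
        for r in range(k):
            if r != col:
                factor = A[r][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][k] for i in range(k)]


def features(price, promo_depth, channel):
    # Intercept, price, promo depth, and a 0/1 dummy for the paid channel
    return [1.0, price, promo_depth, 1.0 if channel == "paid" else 0.0]


# Synthetic observations generated from a made-up demand curve:
# units = 500 - 20*price + 150*promo_depth + 40*(channel == "paid")
observations = [
    (10, 0.0, "organic"), (10, 0.1, "paid"),
    (12, 0.0, "paid"), (12, 0.2, "organic"),
    (14, 0.1, "organic"), (14, 0.0, "paid"),
    (11, 0.2, "paid"), (13, 0.1, "organic"),
]
X = [features(*obs) for obs in observations]
y = [500 - 20 * p + 150 * d + (40 if c == "paid" else 0)
     for p, d, c in observations]

coef = ols(X, y)  # [intercept, price, promo_depth, paid]


def forecast_margin(price, promo_depth, channel, unit_cost):
    """Predicted units times per-unit margin after the promo discount."""
    units = sum(b * x for b, x in zip(coef, features(price, promo_depth, channel)))
    net_price = price * (1 - promo_depth)
    return (net_price - unit_cost) * units


for channel in ("organic", "paid"):
    print(channel, round(forecast_margin(12, 0.1, channel, unit_cost=6.0), 2))
```

Each A/B test result becomes a new row in `observations`; refitting updates the coefficients, which is the "fold results back into the model" step.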

Step 4, create a retention feedback loop

Instrument onboarding and early churn drivers with cohort analysis. Set retention targets tied to compensation, not just activity. Reward outcomes, not effort. This flips incentives away from short bursts of motivated effort to sustained, measurable performance.
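Cohort analysis can start very small. The sketch below groups customers by signup month and reports the share still active after a fixed window. The 90-day window, the record layout, and the sample dates are assumptions; it also assumes every customer has been observed for at least the full window.

```python
from collections import defaultdict
from datetime import date


def retention_by_cohort(customers, day=90):
    """customers: iterable of (signup_date, churn_date or None).

    Returns {"YYYY-MM": share of the cohort still active after `day` days}.
    Assumes every customer has been observed for at least `day` days.
    """
    totals, retained = defaultdict(int), defaultdict(int)
    for signup, churned in customers:
        cohort = signup.strftime("%Y-%m")
        totals[cohort] += 1
        if churned is None or (churned - signup).days > day:
            retained[cohort] += 1
    return {c: retained[c] / totals[c] for c in sorted(totals)}


# Hypothetical customer records
customers = [
    (date(2025, 1, 5), None),
    (date(2025, 1, 10), date(2025, 2, 9)),    # churned after 30 days
    (date(2025, 1, 20), date(2025, 5, 20)),   # churned after 120 days
    (date(2025, 1, 25), None),
    (date(2025, 2, 3), date(2025, 3, 20)),    # churned after 45 days
    (date(2025, 2, 14), None),
]
rates = retention_by_cohort(customers)
for cohort, rate in rates.items():
    print(cohort, f"{rate:.0%}")
```

A per-cohort number like this is what a retention target tied to compensation would be measured against.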

Step 5, make hiring and comp structural, not inspirational

Your people systems must select for the wiring that performs inside the architecture. Use assessment and role-mapping to place Pipeline Developers where velocity matters, Conversion Specialists where closing rates matter, and Solutions Architects where complex sales need diagnosis. Compensate for activity only when that activity is correlated with outcomes in your data. If your best reps do not fit the playbook, change the playbook or change the hire. Motivation is the cheap cover for mismatch.

Key trade-offs and pitfalls

Replacing motivation with systems is a change of focus, not a replacement of humanity. People still matter, but their energy must be channeled through design. Expect three transitions.

1. Short-term morale dip

Removing rituals will irritate some. That irritation is the difference between feel-good and future-proof. Communicate that you are reallocating resources to initiatives that will produce measurable rewards. Share the first wins quickly, even if they are small.

2. Measurement debt

Systems require clean data. Most firms will discover gaps, and cleaning them is tedious. Accept that as a capital expense. The payoff compounds.

3. Over-automation

Automation without human checks produces false certainty. Keep guardrails, human reviews for edge cases, and a rapid escalation path when models diverge from market reality.
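A guardrail of this kind can be as plain as a deviation check between what the model predicted and what the market delivered. This is a sketch under assumptions: the function name and the 15 percent tolerance are hypothetical, and a real escalation path would route to a named owner rather than return a flag.

```python
def needs_human_review(predicted: float, actual: float,
                       tolerance: float = 0.15) -> bool:
    """Flag a result for review when it diverges from the model's prediction."""
    if predicted == 0:
        # No baseline to compare against, so escalate by default
        return True
    return abs(actual - predicted) / abs(predicted) > tolerance


print(needs_human_review(predicted=100, actual=110))  # within tolerance
print(needs_human_review(predicted=100, actual=130))  # diverged, escalate
```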

Where motivation still belongs

There are moments for human leadership that have nothing to do with scaling mechanics. Crisis communication, honoring meaningful wins, and cultural rituals tied to identity are valid. The point is not to outlaw motivation. It is to stop using it as your primary scaling lever.

How to know you are winning

Replace subjective measures of "team energy" with hard signals. Examples:

• Pipeline velocity improves by at least 10 percent within two quarters after removing motivation-first initiatives and replacing them with process changes.

• Promo ROI increases by 3 to 10 percent after the first regression cycle.

• Sales churn falls by 10 to 15 percent within 6 to 12 months of moving budget from experiential spends to tools and retention programs.

• Forecast error tightens by 20 percent, improving capital allocation.
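Forecast error needs a definition before it can tighten. A minimal sketch, assuming mean absolute percentage error (MAPE) as the metric, which this article does not specify; the forecast and actual figures are made up.

```python
def mape(forecast, actual):
    """Mean absolute percentage error across periods."""
    if len(forecast) != len(actual) or not actual:
        raise ValueError("need equal-length, non-empty series")
    return sum(abs(f - a) / abs(a) for f, a in zip(forecast, actual)) / len(actual)


def tightening(error_before, error_after):
    """Relative reduction in forecast error (0.20 means 20 percent tighter)."""
    return (error_before - error_after) / error_before


# Hypothetical periods before and after the architecture change
error_before = mape(forecast=[240, 85, 110, 190], actual=[200, 100, 100, 200])
error_after = mape(forecast=[115, 180, 105, 220], actual=[100, 200, 100, 200])

print(f"before={error_before:.1%} after={error_after:.1%} "
      f"tightening={tightening(error_before, error_after):.0%}")
```

Whatever metric you choose, fix it before the change so the before and after numbers are comparable.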

Final clarity

Motivation is not collateral damage. When used as a scaling strategy, it is deliberate misallocation. It makes leaders feel active while revenue remains stochastic. The market rewards predictability. The architecture that wins in 2026 and beyond is built from AI-driven insight, repeatable plays, and compensation aligned to outcomes. If you want more revenue, stop trying to will it into existence. Engineer it.

Quarterly action

If you take nothing else, run a quarterly Revenue Architecture Audit. Score your organization across the five pillars provided here, allocate at least 30 percent of discretionary culture spend to systems and data, and publish the first dashboard to leadership within 60 days. That is how good operators stop depending on willpower and start compounding wealth.

Frequently Asked Questions

How do I quantify the opportunity cost of motivational spending in my company?

Run a Motivation Spend Audit across the last 12 months, capturing direct costs like offsites and trainers plus the opportunity cost of hours spent in non-billable motivational activity. Compare that total to conservative ROI scenarios for tool investments or AI scans, using a 3 to 15 percent revenue uplift range as your baseline. Present the delta to leadership as avoided churn and incremental revenue to make reallocation decisions tangible.

When is it appropriate to keep motivational programs instead of replacing them with systems?

Keep motivation for crisis communication, honoring significant cultural milestones, and identity-building rituals that do not consume scalable capital. For scaling levers tied to predictable revenue, replace rituals with systems that embed behavior into workflows. Communicate intent clearly and pair any retained motivation spend with measurable outcomes to avoid hidden cost.

How do I implement the weekly AI-Market Scan with limited technical resources?

Start with curated feeds from company filings, analyst notes, journalist pieces, and expert transcripts, then use inexpensive APIs or managed vendors to extract signals into a shared knowledge layer. Focus each week on one hypothesis-driven KPI, like price gap identification, and automate simple alerts or tickets into your CRM or analytics stack. Iterate on signal quality, prioritize high-impact uses, and reassign low-value manual monitoring into the scan.

What are the minimum metrics my GTM dashboard must show to replace pep with predictability?

• Pipeline velocity

• Conversion rate by stage

• Churn by cohort

• Promo ROI

• Pricing elasticity indicator

Configure thresholds that trigger actions, not pep, for example throttle promotions when ROI falls below a set value until a control test runs. Make this dashboard the single source of truth and rationalize reports to reduce noise.

How do I build a regression-based pricing engine if I am not a data scientist?

Begin with a simple model using three variables: price, promo depth, and acquisition channel. Run linear regressions in a spreadsheet or low-code tool to estimate short-run elasticity. Validate with small A/B tests and fold results back into the model, updating coefficients each cycle. Treat the model as decision support, not an oracle, and use it to prioritize pricing experiments that move margin quickly.

What compensation changes force behaviors aligned to retention and LTV rather than short bursts of activity?

Move pay toward cohort-based outcomes and retention-linked targets, for example bonuses based on 90-day retention or net revenue retention improvements. Reduce or remove activity-only incentives and make a portion of variable comp contingent on measurable customer lifetime value metrics. This reorients effort to sustained value creation rather than short-term peaks.
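Net revenue retention is commonly computed as starting recurring revenue plus expansion, minus contraction and churn, divided by starting recurring revenue. The sketch below uses that definition and pairs it with a payout rule that is purely hypothetical: the floor, the target, and the linear ramp between them are illustration only, not a recommended comp plan.

```python
def net_revenue_retention(starting_mrr, expansion, contraction, churned):
    """NRR for a cohort over a period; above 1.0 means the cohort grew."""
    if starting_mrr <= 0:
        raise ValueError("starting_mrr must be positive")
    return (starting_mrr + expansion - contraction - churned) / starting_mrr


def payout_share(nrr, floor=0.95, target=1.05):
    """Hypothetical rule: nothing at or below floor, full payout at or above
    target, linear in between."""
    return min(1.0, max(0.0, (nrr - floor) / (target - floor)))


# Made-up cohort figures
nrr = net_revenue_retention(starting_mrr=100_000, expansion=12_000,
                            contraction=3_000, churned=5_000)
print(f"NRR={nrr:.2f}, payout share={payout_share(nrr):.0%}")
```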

What measurement debt should I expect when shifting to a systems-first approach and how long will cleanup take?

• Missing stage timestamps

• Inconsistent channel attribution

• Lack of reliable cohort keys

These gaps block predictability and elasticity modeling. Plan for a 1 to 3 quarter remediation window depending on scale, treating the work as capital investment rather than housekeeping. Prioritize fixes that unblock immediate experiments and visibility for leadership.

How do I prevent over-automation while still getting predictable revenue lifts?

Keep human-in-the-loop guardrails for edge cases, run canary tests before full rollouts, and define rapid escalation for model drift or market anomalies. Use automation to reduce friction but require human sign-off for outcomes that deviate materially from expectations. This preserves judgment where it matters and maintains trust in the system.

What ROI should I expect from reallocating culture spend into tools, analytics, and retention programs?

Conservative deployments typically return 3 to 15 percent in revenue uplift and can reduce sales churn by up to 15 percent within 6 to 12 months, depending on baseline maturity. Measure quarter over quarter and treat early wins as proof points to unlock additional capital. If you do not see measurable lift within the expected window, iterate on tool selection and experiment design rather than reverting to rituals.

What level of forecast error improvement validates that the architecture shift is working?

A tightening of forecast error by roughly 20 percent within two to four quarters is a strong signal that predictability has improved and capital allocation will be less risky. If you hit that target, increase investment in the levers producing the improvement, for example pricing models or retention loops. If not, diagnose data gaps and experiment quality before reverting to motivation-first tactics.

How do I restructure hiring and roles so people perform inside the revenue architecture without destroying culture?

• Pipeline Developers for velocity

• Conversion Specialists for close rates

• Solutions Architects for complex deals

Map roles to the functional needs of the architecture, then use assessments to validate fit. Run small pilots when changing comp or role expectations and share the data transparently to reduce fear. Communicate the why, show early wins, and protect rituals that genuinely bind the team while removing activities that substitute for structural fixes.

How do I run a Quarterly Revenue Architecture Audit step by step?

• Score the organization across the five pillars

• Quantify last quarter's motivational spend and compare it to potential investments with conservative ROI estimates

• Stand up or update the GTM dashboard

• Execute one AI-market scan hypothesis

• Run at least one pricing or retention experiment with clear success criteria

• Publish results to leadership within 60 days

• Reallocate at least 30 percent of discretionary culture spend toward tools and experiments

Repeat each quarter with measured improvement targets.
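The scoring step of the audit can be sketched as follows. The 1 to 5 scale, the pillar keys, and the sample scores are assumptions; the article names the five pillars but does not define a scoring rubric.

```python
PILLARS = (
    "predictability",
    "throughput",
    "pricing_and_promo_elasticity",
    "retention",
    "capital_allocation",
)


def audit_score(scores):
    """Return (average score, weakest pillar) from a 1 to 5 score per pillar."""
    missing = [p for p in PILLARS if p not in scores]
    if missing:
        raise ValueError(f"missing pillar scores: {missing}")
    average = sum(scores[p] for p in PILLARS) / len(PILLARS)
    weakest = min(PILLARS, key=lambda p: scores[p])
    return average, weakest


# Hypothetical scores for one quarter
average, weakest = audit_score({
    "predictability": 2,
    "throughput": 3,
    "pricing_and_promo_elasticity": 1,
    "retention": 4,
    "capital_allocation": 3,
})
print(f"average={average:.1f}, weakest pillar={weakest}")
```

Tracking the same scores quarter over quarter gives the improvement targets something fixed to measure against, and the weakest pillar is a natural candidate for the next experiment.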

Key Takeaways

• Stop using motivation as your scaling strategy. Reallocate recurring culture spend to systems and data that produce predictable revenue uplifts in the 3 to 15 percent range.

• Make predictability the primary operating metric by instrumenting clean inputs, repeatable processes, and CRM micro-triggers so forecast error tightens and capital is allocated with confidence.

• Optimize throughput, not activity, by codifying winning plays, automating handoffs, and tracking conversion by stage so qualified opportunities move end to end faster.

• Treat pricing and promotions as engineered revenue engines: run regression-based elasticity tests and A/B experiments, and expect 3 to 10 percent margin gains within months.

• Turn retention into a compounding lever by instrumenting onboarding and cohort analysis, tying compensation to outcomes, and reducing early churn by double digits.

• Make hiring and comp structural by mapping roles to revenue archetypes, assessing for fit against the playbook, and only incentivizing activity when it is proven to drive outcomes.

• Run a quarterly Revenue Architecture Audit across the five pillars, reallocate at least 30 percent of discretionary culture spend to tools and experiments, and publish a cross-functional dashboard to leadership within 60 days.