Short Answer

If your P&L shows widening operating expense with flat revenue, longer sales cycles, messy handoffs, or duplicated tools, you are adding complexity — not scaling.

True scale is revenue that multiplies relative to input: CAC falls, net retention rises, win rates improve, and driver-based forecast error sits under 5%.

Fix it fast:

• Run a 90-day surgical audit

• Inventory the stack

• Cut the lowest‑impact 20–30% of tools/processes

• Require a three‑month payback for any hire or tool

• Make the pipeline your single source of truth

If CAC and forecast error don’t measurably improve in 90 days, simplify more — scaling is compression of friction, not adding activity.

Most founders treat expansion like a list

Most founders treat expansion like a list. Add a tool. Hire three reps. Build a marketing funnel. Repeat. It feels like progress. It rarely is.

In 2026, the market rewards one thing above all: speed with margin. AI automation, usage-based pricing, and tighter capital markets mean mistakes compound faster. The common error I see at scale is not bad strategy. It is an architecture problem. Teams, tools, and processes get layered on top of one another until revenue slips through the cracks. Profitability erodes. Forecasts wobble. Growth plateaus.

If your P&L shows widening operating expense and flat revenue growth, you are likely adding complexity, not scaling. True scale is not larger. It is more efficient. It is revenue that multiplies relative to input, not revenue that follows input. That distinction changes every decision you make about people, tech, and strategy.

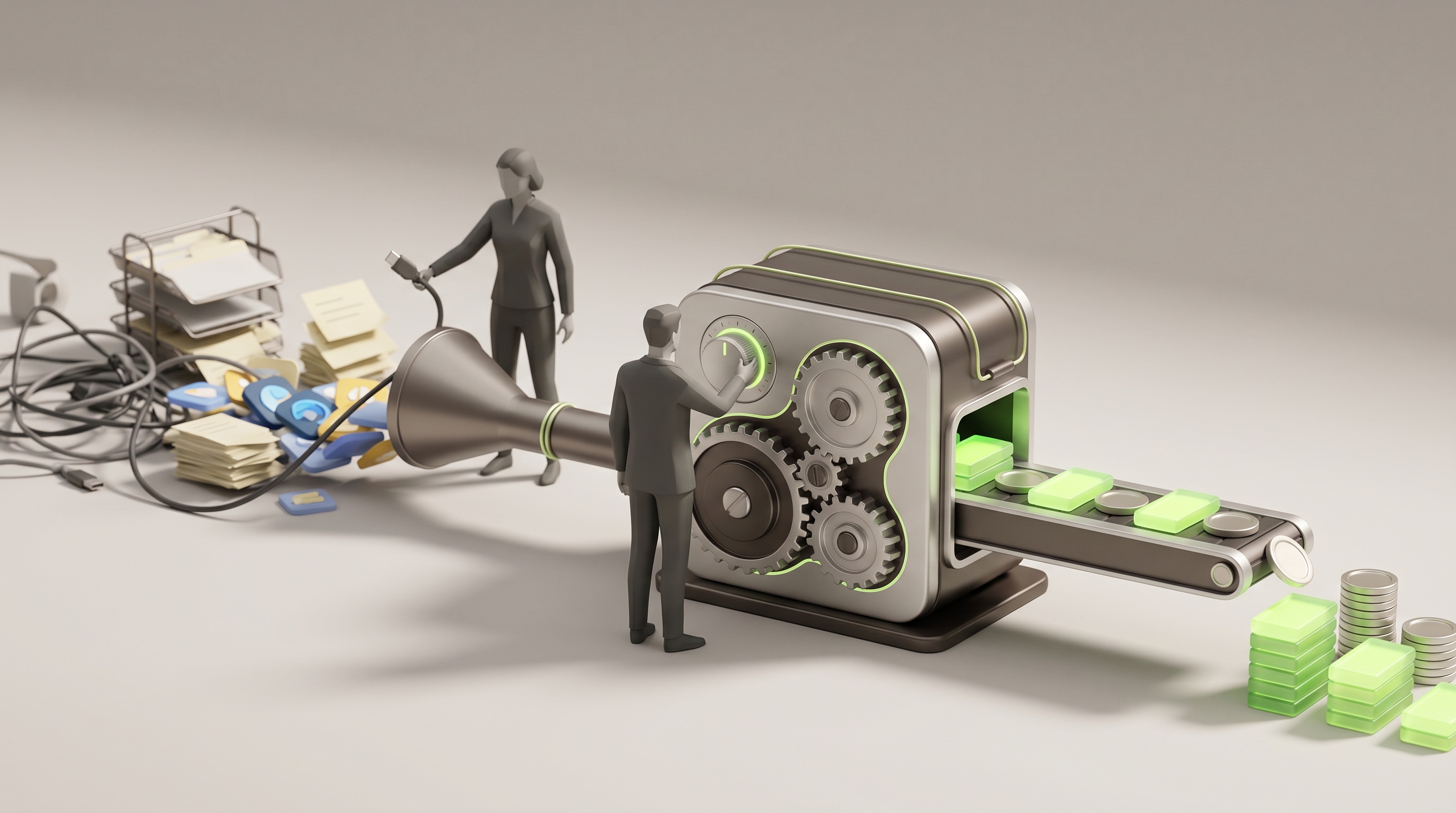

The thesis is simple. Stop treating growth as activity. Design revenue as a machine. Identify the smallest, highest-leverage constraints, remove them, and build systems that compound. When you do that, a 20 to 30 percent cut in cost of customer acquisition becomes a recurring source of profit, not a one-off saving.

How complexity kills revenue

Complexity is stealthy. It shows up as longer sales cycles, lower renewal rates, messy handoffs, duplicated tools, and forecasts that miss by double digits. Two predictable patterns repeat across companies that confuse scale with complexity.

1. Tech sprawl

Teams adopt specialist tools to solve local problems. Over time the stack fragments. Data lives in silos. Integration costs explode. The result is slower pipeline movement and higher CAC. You pay for automation, and you lose speed.

2. Headcount dilution

Hiring is easier than redesigning work. Instead of making each rep more productive, leaders add bodies to cover operational gaps. Headcount grows while per-rep throughput falls. Your payroll increases, and your output does not scale.

Both patterns inflate apparent capacity while reducing velocity. A 25 to 40 percent increase in CAC, a 20 to 30 percent erosion of margins, and forecast variance that shakes investor confidence are common outcomes.

A practical framework for real scaling

I use five pillars to determine whether a business is scaling or simply becoming more complex. Each pillar is a decision surface. The CEO or operator must make a binary choice. Keep it, or cut it. Reallocate, or retire it.

1. Revenue Base Management

Why it matters: Revenue is not a flow to be chased. It is a base to be cultivated. High performers treat current customers as the most reliable source of compounded revenue.

What to do: Map your base by renewal propensity, margin, and competitive risk. Prioritize segments with at least twice the renewal rate of your average customer. Build retention plays that are playbooked, measurable, and automated where possible.

Metrics: Renewal rate by cohort, net revenue retention, churn drivers, time to renewal intervention.
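As a rough illustration of the base-mapping step, the sketch below computes renewal rate by cohort, flags segments that renew at twice the average or better, and computes net revenue retention with the common definition (starting recurring revenue plus expansion, minus contraction and churn, divided by starting recurring revenue). The cohort names, the figures, and the use of a blended average as the "average customer" baseline are all assumptions made for the example.

```python
def renewal_rate(renewed, up_for_renewal):
    """Share of accounts up for renewal that actually renewed."""
    if up_for_renewal <= 0:
        raise ValueError("up_for_renewal must be positive")
    return renewed / up_for_renewal


def net_revenue_retention(starting_mrr, expansion, contraction, churned):
    """NRR for one cohort over one period, as a fraction (1.05 means 105%)."""
    if starting_mrr <= 0:
        raise ValueError("starting_mrr must be positive")
    return (starting_mrr + expansion - contraction - churned) / starting_mrr


# Hypothetical cohorts, for illustration only.
cohorts = {
    "enterprise": {"renewed": 19, "up_for_renewal": 20},
    "smb": {"renewed": 120, "up_for_renewal": 380},
}

rates = {name: renewal_rate(**c) for name, c in cohorts.items()}

# Blended average across all cohorts (an assumed baseline).
average_rate = renewal_rate(
    sum(c["renewed"] for c in cohorts.values()),
    sum(c["up_for_renewal"] for c in cohorts.values()),
)

# Segments renewing at twice the blended average or better get priority.
priority_segments = [name for name, r in rates.items() if r >= 2 * average_rate]

# Hypothetical cohort revenue movements over one period.
cohort_nrr = net_revenue_retention(100_000, 15_000, 3_000, 7_000)

print(priority_segments, round(cohort_nrr, 2))
```

In practice the inputs would come from billing or CRM exports; the point is only that each metric reduces to a small, auditable formula.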

2. Pipeline as Revenue OS

Why it matters: The pipeline is not a CRM artifact. It is the operating system for revenue decisions. If conversion, win/loss, and lead velocity do not predictably produce quota, you do not have a revenue system. You have a ledger.

What to do: Reduce KPIs to a single source of truth. Standardize opportunity stages and conversion rules. Recarve territories and compensation based on conversion data, not tenure or desk politics.

Metrics: Stage conversion rates, sales cycle length, pipeline coverage ratio, time in stage, win rate by rep and segment.
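The pipeline metrics listed here are simple ratios once stages are standardized. The following is a minimal sketch, assuming a hypothetical four-stage funnel and made-up counts; the stage names, `stage_conversion_rates`, and `pipeline_coverage` are illustrative and not taken from any particular CRM.

```python
def stage_conversion_rates(stage_counts):
    """Conversion rate between each pair of consecutive stages.

    stage_counts is an ordered list of (stage_name, opportunities_reaching_stage).
    """
    rates = {}
    for (prev_name, prev_n), (next_name, next_n) in zip(stage_counts, stage_counts[1:]):
        rates[f"{prev_name}->{next_name}"] = next_n / prev_n if prev_n else 0.0
    return rates


def pipeline_coverage(open_pipeline_value, quota):
    """Open pipeline value divided by the quota it must cover."""
    if quota <= 0:
        raise ValueError("quota must be positive")
    return open_pipeline_value / quota


# Hypothetical funnel for one quarter.
funnel = [("lead", 1000), ("qualified", 400), ("proposal", 120), ("won", 30)]

conversion = stage_conversion_rates(funnel)
coverage = pipeline_coverage(open_pipeline_value=3_600_000, quota=1_200_000)

for step, rate in conversion.items():
    print(f"{step}: {rate:.0%}")
print(f"coverage: {coverage:.1f}x")
```

Computing these from one opportunity table, rather than from per-team spreadsheets, is what makes the pipeline a single source of truth.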

3. Driver-Based Forecasting

Why it matters: Forecasts that are opinions create reactive management. Driver-based models force causality. They reveal where money is stuck.

What to do: Build a weekly variance model that tracks inflows, outflows, and renewals. Set a target forecast error of under 5 percent. Run sensitivity analysis for pricing, promotion, and funnel changes. Treat the forecast like a control panel, not an estimate.

Metrics: Forecast error, variance by driver, sensitivity to price and volume changes.
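To make the idea of a driver-based model concrete, here is a deliberately small sketch. It assumes revenue for a period can be approximated as new business (leads × win rate × average deal size) plus renewals minus churn; that decomposition, the `Drivers` fields, and every number are assumptions for illustration, and a real model would carry more drivers.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Drivers:
    leads: float      # inflow: qualified leads in the period
    win_rate: float   # conversion from lead to closed-won
    avg_deal: float   # average deal size
    renewals: float   # renewal revenue expected in the period
    churn: float      # outflow: revenue lost to churn


def forecast(d):
    """Revenue implied by the drivers: new business + renewals - churn."""
    return d.leads * d.win_rate * d.avg_deal + d.renewals - d.churn


def forecast_error(forecast_value, actual):
    """Absolute percentage error relative to actual revenue."""
    if actual == 0:
        raise ValueError("actual must be non-zero")
    return abs(actual - forecast_value) / abs(actual)


def sensitivity(d, driver, pct_change):
    """Change in forecast revenue if one driver moves by pct_change."""
    bumped = replace(d, **{driver: getattr(d, driver) * (1 + pct_change)})
    return forecast(bumped) - forecast(d)


# Hypothetical plan and outcome.
plan = Drivers(leads=200, win_rate=0.25, avg_deal=10_000, renewals=300_000, churn=50_000)
planned_revenue = forecast(plan)
error = forecast_error(planned_revenue, actual=720_000)
price_effect = sensitivity(plan, "avg_deal", 0.10)

print(f"forecast {planned_revenue:,.0f}, error {error:.1%}, +10% price adds {price_effect:,.0f}")
```

Because every term is an explicit driver, a miss can be traced to the input that moved, which is the "control panel" behavior described above.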

4. People, Incentives, and Structure

Why it matters: People do what they are incentivized to do. Misaligned comp plans and fuzzy role definitions create redundancy and margin leakage.

What to do: Reclassify roles by archetype. Map sales behaviors to outcomes. Pay for the outcomes that move velocity, not for activity. When hiring, require a payback plan, not a hope.

Metrics: Revenue per rep, payback period per hire, conversion by role archetype, overlap in territories.

5. AI and Systems as Orchestration, Not Complexity

Why it matters: AI is a force multiplier when used to remove work and focus decisions. It is pernicious when it creates another layer to manage.

What to do: Automate decision workflows, not dashboards. Integrate analytics so they feed one version of truth. Automate routine actions that reduce friction in renewal and cross-sell flows.

Metrics: Percent of ops automated, reduction in handoffs, speed to value for AI-enabled processes.

Three ruthless decision rules

Decisions need guardrails. Without them, leaders drift back to adding complexity. Use these rules to separate true investments from noise.

1. The Payback Rule

Any new hire, tool, or GTM initiative must pass a payback threshold within three months or be shelved. Faster payback forces you to prioritize velocity over vanity.

2. The Forecast Rule

If your weekly driver-based forecast error exceeds 5 percent for two consecutive quarters, restructure your revenue model. Small errors compound into strategic failure.

3. The Minimal Stack Rule

Every tool must demonstrate a clear delta in CAC reduction or pipeline velocity. If a tool cannot be tied to either within 90 days, remove it. No exceptions.
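The three rules can be expressed as simple checks. The sketch below is one possible encoding under stated assumptions: payback is taken as upfront cost divided by the monthly margin gain, forecast error is supplied as one aggregated figure per quarter, and a tool passes the stack rule if it either lowers CAC or raises pipeline velocity. All function and parameter names are hypothetical.

```python
def payback_months(upfront_cost, monthly_margin_gain):
    """Months needed for the monthly margin gain to repay the upfront cost."""
    if monthly_margin_gain <= 0:
        return float("inf")  # never pays back
    return upfront_cost / monthly_margin_gain


def passes_payback_rule(upfront_cost, monthly_margin_gain, max_months=3):
    """Payback Rule: shelve anything that does not pay back within max_months."""
    return payback_months(upfront_cost, monthly_margin_gain) <= max_months


def breaches_forecast_rule(quarterly_errors, threshold=0.05, consecutive=2):
    """Forecast Rule: True if error exceeds threshold for `consecutive` quarters in a row."""
    streak = 0
    for err in quarterly_errors:
        streak = streak + 1 if err > threshold else 0
        if streak >= consecutive:
            return True
    return False


def passes_minimal_stack_rule(cac_change, velocity_change):
    """Minimal Stack Rule: keep a tool only if CAC fell or pipeline velocity rose."""
    return cac_change < 0 or velocity_change > 0


# Hypothetical inputs.
print(passes_payback_rule(30_000, 12_000))         # pays back in 2.5 months
print(breaches_forecast_rule([0.04, 0.06, 0.07]))  # two bad quarters in a row
print(passes_minimal_stack_rule(cac_change=0.0, velocity_change=0.0))
```

Writing the rules down this way forces agreement on the inputs (what counts as margin gain, how weekly error rolls up to a quarter) before any individual decision is argued.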

A short arithmetic example that matters

Starting point: Annual revenue 100 million, CAC 20, LTV:CAC 3:1, operating margin 18 percent.

Interventions: Cut tech sprawl and duplicate tools, reducing CAC by 20 percent. Improve renewal programs to lift net retention by 10 percent. Reassign territories and comp to increase win rate by 15 percent.

Result: Effective CAC falls to 16. LTV rises because renewals increase. LTV:CAC moves from 3:1 to approximately 4.2:1. Revenue grows with the same headcount, and operating margin expands by 6 to 9 percentage points depending on gross margin. Those are not small numbers. They are levers that multiply value.
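The unit-economics part of this example can be checked in a few lines. The sketch assumes, as a simplification, that a 10 percent lift in net retention raises LTV by the same 10 percent; the true relationship depends on customer lifetime and margin, so treat the output as indicative. Under this assumption the ratio lands a little above 4:1, in line with the approximate figure above, and a stronger LTV response to retention would push it higher.

```python
# Starting point from the example.
cac_before = 20.0
ltv_to_cac_before = 3.0
ltv_before = ltv_to_cac_before * cac_before  # LTV implied by the 3:1 ratio

# Interventions.
cac_reduction = 0.20   # CAC falls by 20 percent
retention_lift = 0.10  # net retention rises by 10 percent

cac_after = cac_before * (1 - cac_reduction)

# Assumption: LTV scales one-for-one with the retention lift.
ltv_after = ltv_before * (1 + retention_lift)

ltv_to_cac_after = ltv_after / cac_after

print(f"CAC: {cac_before:.0f} -> {cac_after:.0f}")
print(f"LTV:CAC: {ltv_to_cac_before:.1f}:1 -> {ltv_to_cac_after:.2f}:1")
```

The win-rate improvement is left out here because its effect on CAC depends on how acquisition spend is attributed; it would improve the ratio further.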

When to add complexity

Adding a tool, headcount, or new channel is not automatically wrong. It becomes wrong when it is the default. Add complexity only when three conditions are met.

1. You have resolved existing constraints in the five pillars. No amount of new software will fix a bad comp plan.

2. The initiative passes the Payback Rule and a 3x ROI hurdle over a defined period.

3. You can instrument the change with driver-based metrics before full rollout, so you can stop fast if it fails.

A practical 90-day playbook

If you are the CEO, run this sequence. If you are the head of revenue, make the CEO sign off on it.

Days 1 to 14, map

Create a full inventory of tools, costs, and owners. Map the revenue stack from lead touch to renewal. Tag every element as revenue-critical, support, or redundant.

Days 15 to 30, measure

Tie each tool and role to a revenue metric. Calculate CAC per channel and revenue per rep. Build the initial driver-based forecast with inflows, conversion, and outflows.

Days 31 to 60, act

Remove the lowest-impact 20 to 30 percent of tools and processes. Reallocate budget to pipeline acceleration plays that have proven payback within three months.

Days 61 to 90, optimize

Recarve territories and adjust comp to reflect conversion data. Start one pricing experiment with a small, comparable cohort. Automate renewal workflows using a single analytics source of truth.

By day 90, expect a measurable reduction in CAC and a visible tightening of forecast error. If you do not see that, you cut more.
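The map and act steps can be sketched as a small inventory exercise. In the example below, every tool carries a tag and an impact score, revenue-critical tools are protected, and the lowest-impact quarter of the stack is proposed for removal. The tool names, costs, the `impact` score, and the 25 percent default are hypothetical; how impact is measured is exactly the work of days 15 to 30.

```python
import math
from dataclasses import dataclass


@dataclass
class Tool:
    name: str
    annual_cost: float
    tag: str       # "revenue-critical", "support", or "redundant"
    impact: float  # hypothetical 0-1 score tying the tool to a revenue metric


def select_cuts(tools, fraction=0.25):
    """Propose the lowest-impact share of the stack, never touching revenue-critical tools."""
    target = math.floor(len(tools) * fraction)
    candidates = sorted(
        (t for t in tools if t.tag != "revenue-critical"),
        key=lambda t: t.impact,
    )
    return candidates[:target]


# Hypothetical inventory.
inventory = [
    Tool("crm", 120_000, "revenue-critical", 0.90),
    Tool("billing", 60_000, "revenue-critical", 0.80),
    Tool("email_sequencer", 30_000, "support", 0.50),
    Tool("enrichment", 25_000, "support", 0.40),
    Tool("dialer_a", 20_000, "support", 0.30),
    Tool("chat_widget", 15_000, "support", 0.20),
    Tool("dialer_b", 18_000, "redundant", 0.05),
    Tool("legacy_dashboard", 12_000, "redundant", 0.02),
]

cuts = select_cuts(inventory)
savings = sum(t.annual_cost for t in cuts)

print([t.name for t in cuts], savings)
```

The freed budget is what gets reallocated to pipeline acceleration plays; if CAC and forecast error have not moved by day 90, rerunning the selection with a larger `fraction` is the "cut more" step.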

The non-obvious leverage few leaders use

1. Treat the pipeline as an instrument, not data. Run it weekly. Make it the basis for sales huddles, not a monthly review.

2. Use competitive wiring in hiring and role alignment. Matching rep archetype to market context reduces deal kill errors.

3. Phase complexity out monthly. Pick one area each month to simplify. Complexity compounds like interest. Simplifying compounds value the same way.

Leadership trade-offs you must accept

Simplification creates short-term pain. You will have people, tools, and habits that were comfortable, and you will remove them. That is intentional. The alternative is a slower death by attrition, where an expanding cost base kills optionality.

You will need to be decisive about people and culture. That does not mean punitive action. It means clarity of expectation and a single operating truth that everyone must align to. Fuzzy incentives will always beat execution.

Final clarity

Scaling is not a multiplication of inputs. It is a compression of friction. If your business looks larger but moves slower, you are adding complexity, not scale. The corrective path is surgical. Audit your stack. Measure causality. Rebuild incentives. Treat pipeline metrics as your operating system. Use AI to automate decisions, not to justify another dashboard.

Start with one question at your weekly leadership meeting, and hold the room to the answer. How did our actions this week increase revenue velocity, not just activity? If you cannot answer that with a number, you are still adding complexity.

Scale happens when decisions stop being messy. When the machine hums, the numbers change. Design the machine.

Frequently Asked Questions

Question: How can I tell if my company is actually scaling or just adding complexity?

Answer: Look for velocity, not vanity metrics. If CAC and operating expense are rising while revenue growth stalls, forecast error widens beyond 5 percent, renewal rates drop, or revenue per rep declines, you’re likely adding complexity. Run a 30-day inventory of tools, roles, and handoffs and measure their direct contribution to pipeline velocity and CAC to confirm.

Question: What should a CEO do in the first 90 days to stop complexity from compounding?

Answer: Follow a three-phase sequence: map (days 1–14) every tool, process, and owner, and tag revenue-critical items; measure (days 15–30) by tying tools and roles to CAC and revenue per rep; act and optimize (days 31–90) by cutting the lowest-impact 20–30 percent of tools and processes, reallocating spend to plays with three-month payback, recarving territories, and running one pricing test. Expect measurable CAC reduction and tighter forecast variance by day 90; if you do not see them, cut more.

Question: How do I quantify and prioritize tech sprawl in a way that leads to revenue decisions?

Answer: Inventory every tool with cost, owner, integration hours, and the metric it affects (CAC, conversion, NRR). Calculate the delta in CAC or pipeline velocity attributable to each tool within a 90-day test window; deprioritize or retire tools that cannot demonstrate a measurable CAC reduction or velocity gain. Prioritize consolidations that remove handoffs and centralize a single source of truth.

Question: When is it the right time to hire additional sales reps versus optimizing the existing team?

Answer: Require a payback plan for every hire: if a new hire cannot show a path to payback within three months (per your Payback Rule), optimize first. Reclassify roles by archetype, measure revenue per rep and conversion by archetype, then hire only into proven gaps where increased capacity directly increases pipeline velocity and short-term payback.

Question: What’s the simplest way to apply driver-based forecasting in a scaling company?

Answer: Build a weekly model that tracks inflows (leads), conversion rates by stage, outflows (lost deals and churn), and renewals as drivers, and use that model to produce a single forecast number. Track weekly variance by driver, target a forecast error under 5 percent, and run sensitivity tests on price and volume so the forecast becomes a control panel for decisions rather than an opinion.

Question: How should I redesign compensation and structure to prioritize velocity over activity?

Answer: Pay for outcomes that move funnel velocity: conversion, time-in-stage reduction and revenue per rep, not call counts or activity. Map behaviors to outcomes, eliminate overlap in territories, and require a payback plan for hires; expect short-term churn in behaviors but long-term gains in margin and forecast reliability.

Question: How do I use AI without creating another layer of complexity?

Answer: Automate decision workflows — e.g., lead triage, renewal triggers, next-best-action — and integrate outputs into one analytics source of truth, not separate dashboards. Measure AI by reduction in handoffs and speed-to-value for automated processes, and stop any AI pilot that doesn’t improve CAC or pipeline velocity within the agreed test window.

Question: What metrics should I watch to prove simplification is working within 90 days?

Answer: Track CAC, forecast error, net revenue retention, revenue per rep, win rate, and time-in-stage. A meaningful signal is a 15–25 percent improvement in at least two of these (CAC reduction or win-rate lift plus reduced forecast error) within the 90-day window; if you don’t see it, escalate cuts.
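One way to operationalize this check is to compare a before and after snapshot and count the metrics that improved by at least the lower bound of 15 percent. The sketch below uses the simpler reading "any two metrics" rather than the specific pairing in the parenthetical, treats CAC, forecast error, and time in stage as lower-is-better, and uses invented numbers throughout.

```python
LOWER_IS_BETTER = {"cac", "forecast_error", "time_in_stage"}


def improvement(metric, before, after):
    """Relative improvement, signed so that positive always means better."""
    change = (after - before) / before
    return -change if metric in LOWER_IS_BETTER else change


def simplification_signal(before, after, threshold=0.15, min_metrics=2):
    """Return (signal_present, metrics_that_improved_by_threshold_or_more)."""
    improved = [
        m for m in before
        if improvement(m, before[m], after[m]) >= threshold
    ]
    return len(improved) >= min_metrics, improved


# Hypothetical day-0 and day-90 snapshots.
day_0 = {"cac": 20.0, "forecast_error": 0.10, "win_rate": 0.20, "nrr": 1.00}
day_90 = {"cac": 16.0, "forecast_error": 0.06, "win_rate": 0.21, "nrr": 1.02}

signal, improved_metrics = simplification_signal(day_0, day_90)
print(signal, improved_metrics)
```

If the signal is absent at day 90, that is the trigger to escalate cuts rather than to extend the observation window.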

Question: What are the biggest trade-offs when cutting tools and people, and how do I mitigate them?

Answer: Expect short-term productivity drops and loss of tribal knowledge; the trade-off is necessary to remove hidden drag on velocity. Mitigate by phasing removals, documenting processes, assigning owners to retained systems, and reallocating saved budget to high-velocity plays so rescue capacity exists for urgent failures.

Question: How do I prioritize which constraint to remove first for highest leverage?

Answer: Use an impact-versus-effort filter focused on revenue velocity: pick constraints that block many deals (messy handoffs, slow approvals, or low renewal cohorts) and can be fixed in 30–60 days. Fixing an end-to-end bottleneck that shortens cycle time or raises renewal propensity typically delivers outsized CAC and margin improvements.

Question: How should I structure a pricing experiment so it’s decision-ready within 90 days?

Answer: Run pricing changes on a small, comparable cohort with randomized assignment, instrument the test with driver-based metrics (conversion, deal size, churn risk) and set stop-loss thresholds. Analyze sensitivity to price and volume, project LTV:CAC impacts, and only roll out if it meets your payback and forecast-stability criteria.
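A minimal sketch of the assignment and stop-loss mechanics follows, assuming accounts are identified by simple IDs, the test cohort is 10 percent of the pool, and the stop-loss fires when test conversion falls more than 20 percent below control. Those thresholds and both function names are illustrative choices, not recommendations.

```python
import random


def assign_cohorts(account_ids, test_fraction=0.10, seed=42):
    """Randomly split accounts into (test, control); a fixed seed makes the split reproducible."""
    rng = random.Random(seed)
    shuffled = list(account_ids)
    rng.shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[:n_test], shuffled[n_test:]


def stop_loss_triggered(control_conversion, test_conversion, max_relative_drop=0.20):
    """True if the test cohort converts worse than control by more than the allowed drop."""
    return test_conversion < control_conversion * (1 - max_relative_drop)


# Hypothetical pool of 100 comparable accounts.
accounts = [f"acct-{i:03d}" for i in range(100)]
test_cohort, control_cohort = assign_cohorts(accounts)

print(len(test_cohort), len(control_cohort))
print(stop_loss_triggered(control_conversion=0.25, test_conversion=0.18))
```

Randomizing within a pool that is already comparable (same segment, similar deal size) keeps the price change as the only systematic difference between the two groups.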

Question: How do I know when adding a new tool, channel, or initiative is justified?

Answer: Only add complexity when three conditions are met: you’ve resolved constraints across the five pillars, the initiative passes the three-month Payback Rule and a 3x ROI hurdle over a defined period, and you can instrument the change with driver-based metrics to stop fast if it underperforms. If any of those is missing, the default should be to simplify, not expand.

Key Takeaways

• Treat revenue as a cultivated base, not a flow to chase; segment for ≥2x renewal propensity and automate measurable retention plays to compound LTV.

• Make the pipeline your Revenue OS: one source of truth, standardized stages, and territory/comp decisions driven by conversion data, not tenure.

• Switch to weekly driver-based forecasting with a <5% error target and use sensitivity analysis as a control panel to reveal where money is stuck.

• Enforce a three‑month Payback Rule for every hire, tool, or GTM initiative and kill anything that can't demonstrably reduce CAC or increase pipeline velocity.

• Eliminate tech sprawl and headcount dilution by cutting the lowest-impact 20–30% of tools/processes and reallocating spend to plays with proven three‑month payback.

• Deploy AI to automate decision workflows and reduce handoffs—integrate analytics into one version of truth, not another dashboard to manage.

• Tie roles to archetypes and pay for outcomes, not activity; require a payback plan for hires so incentives compress friction and multiply margin.